Analysis on Manifolds II: Exterior Algebra

Part II of a five-part lecture series on differential forms and the generalised Stokes theorem.

This lecture includes SymPy cells that verify key results symbolically. SymPy is a Python library for symbolic mathematics.

0. Why algebra before forms

In Part I we built analysis on $\mathbb{R}^n$ and identified the derivative of a map $f\colon \mathbb{R}^n \to \mathbb{R}^m$ at a point $a$ as a linear map $Df(a)\colon \mathbb{R}^n \to \mathbb{R}^m$. The next step is to ask: what is the right object to integrate over a $k$-dimensional surface sitting inside $\mathbb{R}^n$?

The object we integrate is a differential $k$-form: at each point of the manifold, an alternating multilinear map that eats $k$ tangent vectors and returns a signed $k$-volume. A scalar field will not do, because it carries no orientation and cannot distinguish a surface from its mirror image. Integration of forms produces signed quantities that transform correctly under reparametrisation and change of variables; a scalar integrand forces an absolute value on the Jacobian and loses the orientation data that drives Stokes' theorem.

Before we can talk about differential forms on manifolds (Part III), we need to understand the purely algebraic object that lives at a single point: the space $\Lambda^k(V)$ of alternating $k$-linear forms on a vector space $V$. This is exterior algebra. It is linear algebra, nothing more, but the pieces of it that matter for calculus on manifolds are rarely taught in a linear algebra course.

This post builds exterior algebra from scratch. We start with dual spaces and multilinear maps, introduce the alternation operator, define the wedge product, compute the dimension of $\Lambda^k(V)$, recover the determinant as the unique top-degree form, and end with the interior product, the Hodge star, and the algebraic pullback. Everything here is intrinsic: no inner product is needed until Section 10, and no basis is privileged until we use one to compute. The development follows Spivak [1], Munkres [2], and Tu [3]; readers who want the purely algebraic viewpoint should consult Greub [4] and Lang [5].

1. The dual space

Throughout, $V$ denotes a finite-dimensional real vector space of dimension $n$. We will eventually specialise to $V = \mathbb{R}^n$ or, in Part III, to the tangent space $T_pM$ of a manifold at a point.

1.0. Functionals and linear functionals

Before defining the dual space we pin down the word "functional," which will appear constantly.

A functional on $V$ is a map $f\colon V \to \mathbb{R}$. No compatibility with the vector-space structure is assumed: $f$ can be continuous or discontinuous, smooth or not, bounded or unbounded. The set of all functionals on $V$ is the set of all real-valued functions on $V$; with pointwise addition and scalar multiplication it is a (hugely infinite-dimensional) real vector space.

A functional $f$ is linear if it respects the vector-space operations:

$$f(au + bv) = a\,f(u) + b\,f(v) \qquad \text{for all } u, v \in V,\ a, b \in \mathbb{R}.$$

On :

- is linear.

- is a functional but not linear: .

- is a functional but not linear: unless .

- is a functional but not linear: . Every linear functional vanishes at the origin.

- is a functional but not linear: it is positively homogeneous but satisfies , violating linearity in the scalar .

Linearity is a severe restriction. The following proposition makes precise how severe.

Let $V$ have dimension $n$ with basis $(e_1, \dots, e_n)$. Then:

- A linear functional $f\colon V \to \mathbb{R}$ is uniquely determined by the $n$ real numbers $f(e_1), \dots, f(e_n)$. Conversely, any choice of $n$ real numbers extends to a unique linear functional.

- A nonzero linear functional $f$ is surjective onto $\mathbb{R}$, its kernel $\ker f$ is a linear subspace of codimension one (a hyperplane) in $V$, and each level set $f^{-1}(c)$ is an affine translate of $\ker f$. In particular all level sets are mutually parallel.

Proof

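(1). Any $v \in V$ has a unique basis expansion $v = \sum_i v^i e_i$. Linearity forces

$$f(v) = \sum_{i=1}^n v^i f(e_i).$$

So the values $f(e_i)$ determine $f$ everywhere. Conversely, given scalars $c_1, \dots, c_n$, the formula $f(v) = \sum_i c_i v^i$ defines a map that is linear by inspection and satisfies $f(e_i) = c_i$. Uniqueness of the extension follows from the forced formula above.

(2). Suppose $f \neq 0$. Then $f(v_0) \neq 0$ for some $v_0 \in V$. For any $c \in \mathbb{R}$, set $v = \frac{c}{f(v_0)} v_0$; then $f(v) = c$, so $f$ is surjective onto $\mathbb{R}$.

For the kernel: by the rank-nullity theorem applied to $f\colon V \to \mathbb{R}$, $\dim \ker f + \dim \operatorname{im} f = n$. Since $f$ is surjective, $\dim \operatorname{im} f = 1$, so $\dim \ker f = n - 1$; the kernel is a hyperplane.

For level sets: fix $c \in \mathbb{R}$ and pick any $v_c$ with $f(v_c) = c$ (exists by surjectivity). For any $v \in V$,

$$f(v) = c \iff f(v - v_c) = 0 \iff v - v_c \in \ker f.$$

So $f^{-1}(c) = v_c + \ker f$, an affine translate of the hyperplane $\ker f$. Different values of $c$ give translates by different amounts along any vector not in $\ker f$; all translates are parallel.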

This remark unpacks the two parts of [linear-functional-rigid] (and the proof above it).

Part (1) of the proof showed that fixing the $n$ scalars $f(e_1), \dots, f(e_n)$ determines $f$ everywhere by the forced formula $f(v) = \sum_i v^i f(e_i)$. Consequently, linear functionals on an $n$-dimensional space form an $n$-dimensional space themselves: they are parameterised by $n$ scalars, which is a finite amount of data. General functionals form an infinite-dimensional space. Linearity cuts the function space down to something workable and coordinate-friendly.

Part (2) of the proof identified the level set $f^{-1}(c)$ as an affine translate of the kernel hyperplane. This is the geometric picture taken up again in Section 2.1: a covector $f$ is visualised as an infinite stack of parallel hyperplanes (its level sets), and $f(v)$ is the signed count of how many sheets one crosses when walking from the origin to $v$. Generic functionals have arbitrary level sets (spheres, parabolas, disconnected pieces) with no such structure.

The pay-off in the rest of this post: every alternating $k$-form is built from linear functionals by the tensor product and the alternator ([lambda-k-basis]), so the "finite data" property proved in part (1) propagates to all of $\Lambda^k(V)$. And in Part III, the derivative of a smooth function $f$ at a point $p$ will turn out to be a linear functional on the tangent space, the differential $df_p$; linear functionals are how calculus on manifolds connects back to linear algebra at each point.

1.1. The dual space

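The dual space of $V$ is the vector space

$$V^* = \{\, f\colon V \to \mathbb{R} \mid f \text{ is linear} \,\}$$

of linear functionals on $V$, with pointwise addition and scalar multiplication. Elements of $V^*$ are called covectors or 1-forms on $V$.

If $(e_1, \dots, e_n)$ is a basis for $V$, the functionals $\varepsilon^1, \dots, \varepsilon^n$ defined by

$$\varepsilon^i(e_j) = \delta^i_j$$

form a basis for $V^*$. In particular, $\dim V^* = \dim V = n$.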

Proof

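Linear independence. Suppose $\sum_i c_i \varepsilon^i = 0$ in $V^*$. Applying both sides to $e_j$ gives

$$0 = \sum_i c_i\, \varepsilon^i(e_j) = c_j$$

for every $j$, so all $c_j = 0$.

Spanning. Given $f \in V^*$, set $c_i = f(e_i)$. For any $v = \sum_j v^j e_j$, linearity of $f$ gives

$$f(v) = \sum_j v^j f(e_j) = \sum_j c_j v^j.$$

On the other hand, using $\varepsilon^i(v) = v^i$,

$$\Big(\sum_i c_i \varepsilon^i\Big)(v) = \sum_i c_i v^i.$$

The two right-hand sides agree for every $v$, so $f = \sum_i c_i \varepsilon^i$.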
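As a quick numerical check of the dual-basis relation, here is a minimal sketch in plain Python (no SymPy needed). It relies on the standard fact that if the basis vectors are the columns of a matrix $B$, then the dual basis covectors are the rows of $B^{-1}$. The basis of $\mathbb{R}^2$ and the helper `inverse_2x2` are hypothetical choices made only for illustration.

```python
from fractions import Fraction

def inverse_2x2(m):
    """Exact inverse of a 2x2 integer matrix given as a list of rows."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

# Hypothetical basis of R^2, stored as the columns of B: e_1 = (2, 1), e_2 = (1, 1).
B = [[2, 1],
     [1, 1]]
basis = [(B[0][j], B[1][j]) for j in range(2)]

# Row i of B^{-1} holds the components of the dual covector eps^i.
dual = inverse_2x2(B)

# pairing[i][j] = eps^i(e_j), which should be the Kronecker delta.
pairing = [[sum(dual[i][k] * basis[j][k] for k in range(2)) for j in range(2)]
           for i in range(2)]
print(pairing)
```

The printed matrix of pairings should be the identity, which is the defining relation of the dual basis written in coordinates.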

The position of indices tracks transformation behaviour. Under a change of basis (with dual basis ), vector components transform contravariantly, , while covector components transform covariantly, . The Einstein summation convention (sum over any index repeated once up, once down) encodes exactly the pairings that are coordinate-independent.

On with the standard basis, the dual basis is , where extracts the -th coordinate. This is the algebraic skeleton of the differentials we will meet in Part III.

Fix bases of and of . A vector is represented by the column . A covector is represented by the row . The pairing is a matrix product,

Under a change of basis with matrix (so ), covector rows transform as , so that the scalar is invariant. Rows act on columns by matrix multiplication; the formal distinction between row and column is the index-position distinction of the previous remark.

The map sending to , where , is a canonical isomorphism for finite-dimensional .

Proof

By construction , the functional on that evaluates each at .

is linear on . For and scalars ,

So , and maps into the correct codomain.

is linear. For , scalars , and any ,

Since this holds for every , the functionals agree: in , which says .

Injectivity. Suppose in , meaning for every . Pick any basis of with dual basis . Writing , applying to gives

for every . Hence , so .

Surjectivity. By [dual-basis], , and by the same proposition applied to , . An injective linear map between finite-dimensional spaces of equal dimension is surjective (rank-nullity), so every element of is for a unique .

The identification is basis-free. The identification , by contrast, requires a choice of inner product (or basis), and is not canonical.

2. Multilinear maps

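A map $T\colon V^k \to \mathbb{R}$ (where $V^k = V \times \dots \times V$, $k$ factors) is $k$-linear if it is linear in each slot separately: for every index $i$, scalars $a, b$, and vectors $v_1, \dots, v_k, w \in V$,

$$T(v_1, \dots, a v_i + b w, \dots, v_k) = a\,T(v_1, \dots, v_i, \dots, v_k) + b\,T(v_1, \dots, w, \dots, v_k).$$

The space of such maps is denoted $T^k(V)$ (the space of covariant $k$-tensors on $V$).

By convention $T^0(V) = \mathbb{R}$ and $T^1(V) = V^*$.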

Before defining on multilinear maps operationally, we describe the tensor product abstractly. The abstract version applies to any pair of real vector spaces; the operational version on is one of its realisations.

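Let $V$ and $W$ be real vector spaces. The tensor product $V \otimes W$ is a real vector space together with a bilinear map $\otimes\colon V \times W \to V \otimes W$, $(v, w) \mapsto v \otimes w$, satisfying the universal property: for every real vector space $U$ and every bilinear map $B\colon V \times W \to U$, there is a unique linear map $\tilde{B}\colon V \otimes W \to U$ with $\tilde{B}(v \otimes w) = B(v, w)$.

Equivalently, one may construct $V \otimes W$ explicitly as the quotient of the free vector space on $V \times W$ by the subspace spanned by the bilinearity relations

$$(v_1 + v_2, w) - (v_1, w) - (v_2, w), \quad (v, w_1 + w_2) - (v, w_1) - (v, w_2), \quad (cv, w) - c\,(v, w), \quad (v, cw) - c\,(v, w).$$

The symbols $v \otimes w$ are called elementary tensors, or simple tensors. A general element of $V \otimes W$ is a finite sum $\sum_i v_i \otimes w_i$, not usually a single elementary tensor.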

The universal property is the clean statement; the quotient is one model. Both say the same thing: is the "freest" space in which the operation is bilinear, and bilinear maps out of factor through it uniquely.

If and are finite-dimensional with bases and , then the elementary tensors form a basis of . Consequently .

Proof

Spanning. For any and , bilinearity of gives

So every elementary tensor lies in the span of , and finite sums of elementary tensors span .

Linear independence. Let and be the dual bases. The map , , is bilinear; by the universal property it factors as a linear map with . Suppose . Applying gives for every .

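Take $V = W = \mathbb{R}^2$ with standard basis $(e_1, e_2)$. By [tensor-dim], $\mathbb{R}^2 \otimes \mathbb{R}^2$ is four-dimensional with basis $\{e_i \otimes e_j\}_{i,j=1,2}$. The element

$$\tau = e_1 \otimes e_1 + e_2 \otimes e_2$$

is not an elementary tensor. If it were $v \otimes w$ for some $v = v^1 e_1 + v^2 e_2$ and $w = w^1 e_1 + w^2 e_2$, expansion would give coefficients $c^{ij} = v^i w^j$, a matrix of rank at most one. But the coefficient matrix of $\tau$ is the identity, which has rank two. So $\tau$ lies in $\mathbb{R}^2 \otimes \mathbb{R}^2$ but is a sum of two elementary tensors, not one.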

This illustrates a general point: the elementary tensors form a cone inside (the Segre cone), and most tensors live in its linear span rather than on the cone itself.
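The rank argument in the example above can be sketched in a few lines of plain Python. The sketch uses the fact that a $2 \times 2$ coefficient matrix has rank at most one exactly when its determinant vanishes; the helper names `outer` and `det2` and the sample vectors are hypothetical.

```python
def outer(v, w):
    """Coefficient matrix c[i][j] = v[i] * w[j] of the elementary tensor v (x) w."""
    return [[vi * wj for wj in w] for vi in v]

def det2(m):
    """Determinant of a 2x2 matrix given as a list of rows."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

# Any elementary tensor has a coefficient matrix of rank <= 1, so det = 0.
elementary = outer((3, -2), (5, 7))

# Coefficient matrix of e_1 (x) e_1 + e_2 (x) e_2: the identity, of rank 2.
tau = [[1, 0], [0, 1]]

print(det2(elementary), det2(tau))  # prints: 0 1
```

A nonzero determinant is therefore an obstruction to writing a tensor in $\mathbb{R}^2 \otimes \mathbb{R}^2$ as a single elementary tensor.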

Take for a finite-dimensional . The universal property says is characterised by: any bilinear map from factors through it. The bilinear map lands in (bilinear forms on ). It extends to a linear map sending to the bilinear form , and a dimension count (both spaces have dimension , using [tensor-dim] on the left and [tensor-basis] on the right) shows this map is an isomorphism. More generally,

This identification is the content of the next definition: it realises on multilinear maps by the operational formula below.

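Given $S \in T^k(V)$ and $T \in T^l(V)$, define $S \otimes T \in T^{k+l}(V)$ by

$$(S \otimes T)(v_1, \dots, v_{k+l}) = S(v_1, \dots, v_k)\, T(v_{k+1}, \dots, v_{k+l}).$$

Under the identification of the previous example, this agrees with the abstract tensor product of [abstract-tensor-product].

If $(\varepsilon^1, \dots, \varepsilon^n)$ is the dual basis of $V^*$, then the products $\varepsilon^{i_1} \otimes \dots \otimes \varepsilon^{i_k}$, with $1 \le i_1, \dots, i_k \le n$, form a basis of $T^k(V)$. In particular, $\dim T^k(V) = n^k$.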

Proof

Spanning. Given , set

Define . For basis vectors ,

Two -linear maps that agree on all tuples of basis vectors agree on all of by multilinearity, so .

Linear independence. Suppose . Evaluating on gives by the same Kronecker-delta collapse.

The space is too large for our purposes. Most of its elements have no geometric meaning as volumes. We now restrict to the subspace that does.

2.1. Multilinear maps, tensors, and matrices

Three related words get conflated in practice. They are not interchangeable.

- A multilinear map is a function that is linear in each slot. This is a definition about behaviour, what the object does when fed vectors. No coordinates appear.

- A covariant -tensor is a multilinear map together with a declaration of the space it lives in, , and the rules for combining it with other tensors (, , , pullback). Within this post, "multilinear map on " and "covariant -tensor" name the same object from two viewpoints.

- A matrix is a rectangular array of numbers. A matrix represents a tensor only after a basis is fixed, and only cleanly for rank 2 (higher ranks need -dimensional arrays). Change the basis and the entries change, but the tensor is the same. The same cautionary note applies to vectors and covectors: a column of numbers represents a vector only relative to a chosen basis.

Each viewpoint answers a different question. The multilinear map answers "what does this object do geometrically?" The tensor answers "which space does it live in, and how does it combine with others?" The matrix answers "how do I compute with it in one particular basis?"

Geometric content by rank. The multilinear maps that arise in geometry come with pictures.

- A 1-tensor (covector ) measures a signed component: is the signed projection of onto one direction. Picture as an infinite stack of parallel level hyperplanes in ; the number counts how many sheets pierces, with sign.

- A symmetric 2-tensor (inner product ) measures lengths and angles: , and .

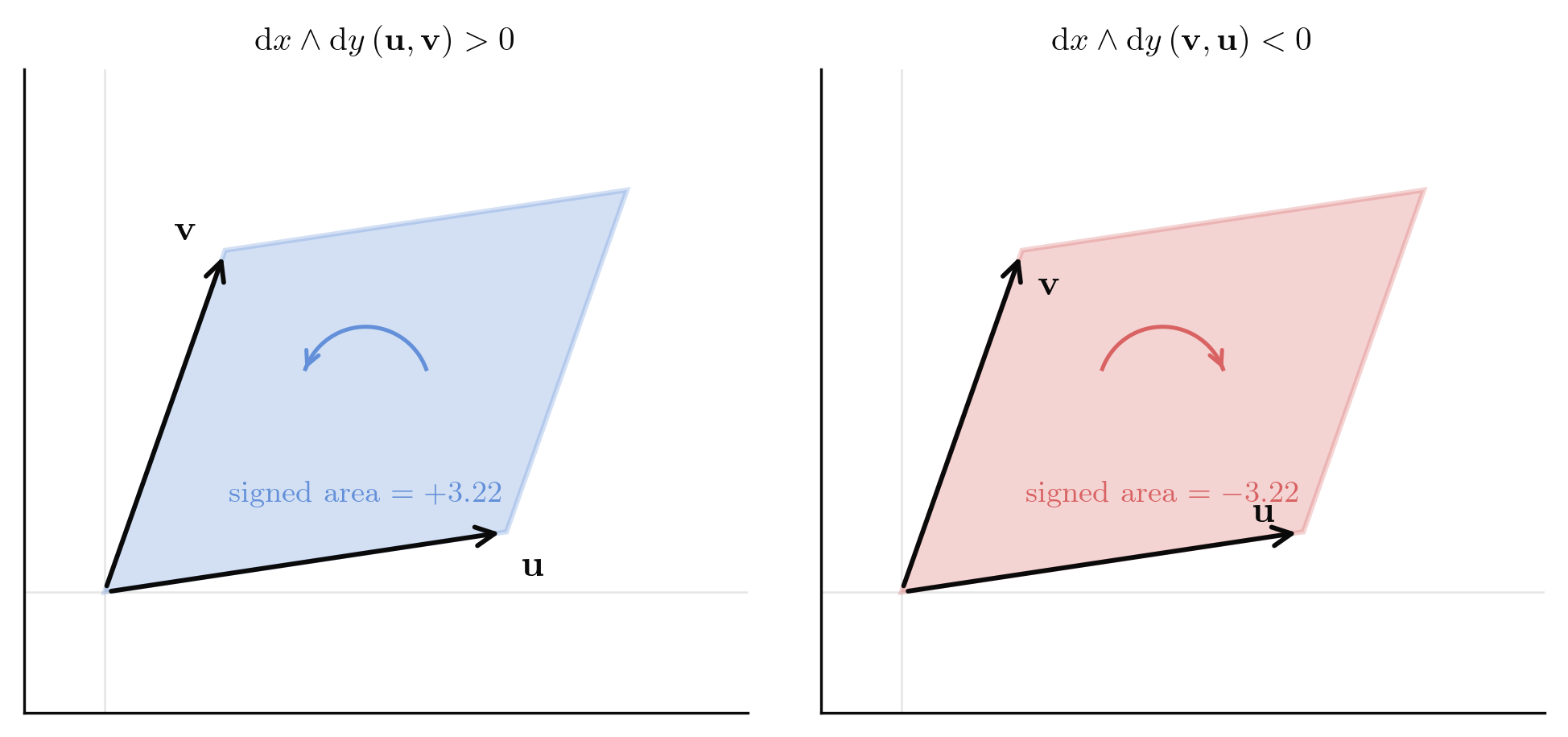

- An antisymmetric 2-tensor (2-form ) measures signed area: is the signed area of the parallelogram spanned by and , oriented by their order.

- An alternating -form measures the signed -volume of the parallelepiped spanned by its arguments ([alt-equiv](3)).

- A general, not-necessarily-symmetric, not-necessarily-alternating -tensor has no single geometric picture. It is a -linear weighted combination of its arguments' components. The useful geometric tensors are the ones with symmetry or antisymmetry; they occupy proper subspaces of , and the rest of this post concerns one of them, .

Matrix multiplication covers exactly one tensor operation. When are linear maps (rank- tensors, one upper and one lower index), the composition is realised by the matrix product . Every other tensor operation lies outside matrix algebra:

- The evaluation looks like three factors multiplied but is a -tensor eating two vectors, not a composition of linear maps.

- The wedge of two 1-forms is a 2-form with antisymmetric matrix; no product of row vectors and produces it.

- Contraction of a -tensor with a vector reduces the rank by one (Section 9). Matrix operations have no such degree-shifting machinery.

- For , the "matrix" of a -tensor is a -dimensional array; operations on it require index notation, not matrix notation.

Index position carries information that matrices hide. Upper (contravariant) and lower (covariant) indices obey different transformation laws under a change of basis. Matrix notation writes both as rows and columns and pretends they are interchangeable under transpose; they are not. An inner product (two lower indices) and a bilinear form on (two upper indices) both display as arrays but live in different tensor spaces. Going from one to the other requires the metric (index raising). The matrix representation forgets data that index notation preserves.

With that in mind, we record the representations. Fix with standard basis and dual basis .

Vectors and covectors. A vector is a column; a covector is a row:

A general 2-tensor. An element has nine independent components and acts as

The basis corresponds to the nine matrix units , confirming from [tensor-basis].

A symmetric 2-tensor. Symmetric tensors () form a 6-dimensional subspace; the inner product is the prototype. In the standard Euclidean case,

An alternating 2-tensor (a 2-form). For , the matrix is antisymmetric, so only the three entries above the diagonal are independent:

The three parameters recover from [lambda-k-basis]. The generic 2-tensor above decomposes as , the symmetric-plus-antisymmetric split that mirrors the direct-sum decomposition from Section 5 at the level of matrices.

A 3-tensor. An element of has components . Since a matrix is two-indexed we display the cube as three slices, one for each value of the first index:

The evaluation is

A 3-form on . The volume form has components , the Levi-Civita symbol. Written as three slices:

Six of the twenty-seven entries are nonzero (the six permutations of ), three with sign and three with sign . Every other is a scalar multiple of this, confirming .

Metric tensor in spherical coordinates. The Euclidean metric expressed in (with the polar angle):

The same symmetric 2-tensor has different matrix entries in different bases, obeying the covariant transformation law from the remark in Section 1.

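3. Alternating $k$-tensors

A $k$-tensor $T \in T^k(V)$ is alternating if it changes sign whenever two of its arguments are swapped: for every $i < j$ and every $v_1, \dots, v_k \in V$,

$$T(v_1, \dots, v_i, \dots, v_j, \dots, v_k) = -\,T(v_1, \dots, v_j, \dots, v_i, \dots, v_k).$$

The space of alternating $k$-tensors on $V$ is written $\Lambda^k(V)$. Its elements are called $k$-forms (or $k$-covectors) on $V$.

For $T \in T^k(V)$, the following are equivalent:

- $T$ is alternating.

- $T(v_1, \dots, v_k) = 0$ whenever two arguments are equal.

- $T(v_1, \dots, v_k) = 0$ whenever $v_1, \dots, v_k$ are linearly dependent.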

Proof

(1) (2). Setting in the swap identity gives , hence on such tuples, so .

(2) (1). Fix indices and all other arguments. Define with in slot and in slot . Then is bilinear and vanishes on the diagonal by (2). Expand:

The first and last terms vanish by (2), leaving , which is the swap identity.

(2) (3). Suppose are linearly dependent, so one of them, say , is a combination . By multilinearity,

Each summand has a repeated argument ( appears twice), so it vanishes by (2). Hence the whole sum is zero.

(3) (2). If two arguments are equal, the tuple is linearly dependent.

Condition (3) is the geometric heart of the definition: an alternating -tensor measures a signed volume of the parallelepiped spanned by its arguments, and a degenerate parallelepiped (one in a proper subspace) has zero volume. Everything else in this post is a consequence of this.

4. Permutations and the sign

To compute with alternating tensors we need the symmetric group.

A permutation of $\{1, \dots, k\}$ is a bijection $\sigma\colon \{1, \dots, k\} \to \{1, \dots, k\}$. The set of all such permutations is the symmetric group $S_k$. A transposition is a permutation that swaps two elements and fixes the rest.

Every permutation is a product of transpositions. The sign is $\operatorname{sgn}(\sigma) = (-1)^m$, where $m$ is the number of transpositions in any such factorisation. The sign is well-defined (independent of the factorisation) and multiplicative: $\operatorname{sgn}(\sigma\tau) = \operatorname{sgn}(\sigma)\operatorname{sgn}(\tau)$.

We take the well-definedness of the sign as known from a first course in abstract algebra. A short proof uses the parity of the number of inversions of $\sigma$, where an inversion is a pair $i < j$ with $\sigma(i) > \sigma(j)$.
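The inversion-count description of the sign is easy to experiment with. The following is a minimal sketch in plain Python, with permutations stored as 0-based tuples; the helpers `sign` and `compose` are hypothetical names, and the check of multiplicativity is a brute-force test on $S_4$, not a proof.

```python
from itertools import permutations

def sign(p):
    """Sign of a permutation (tuple of 0-based images) via inversion parity."""
    inv = sum(1 for i in range(len(p)) for j in range(i + 1, len(p)) if p[i] > p[j])
    return -1 if inv % 2 else 1

def compose(p, q):
    """Composition p after q: i -> p[q[i]]."""
    return tuple(p[q[i]] for i in range(len(q)))

S4 = list(permutations(range(4)))

# sgn(p q) = sgn(p) sgn(q), checked on all 24 * 24 pairs.
multiplicative = all(sign(compose(p, q)) == sign(p) * sign(q) for p in S4 for q in S4)

# A transposition has sign -1; even and odd permutations are equally numerous.
transposition = (1, 0, 2, 3)
print(multiplicative, sign(transposition), sum(sign(p) for p in S4))  # prints: True -1 0
```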

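A $k$-tensor $T$ is alternating if and only if for every $\sigma \in S_k$,

$$T(v_{\sigma(1)}, \dots, v_{\sigma(k)}) = \operatorname{sgn}(\sigma)\, T(v_1, \dots, v_k).$$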

Proof

(). Taking to be any transposition gives and hence the swap identity, so is alternating.

(). Assume is alternating. Write as a product of transpositions. Apply the swap identity once per transposition, starting from the rightmost: each application flips one sign. After applications,

5. The alternation operator

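There is a canonical projection from $T^k(V)$ onto $\Lambda^k(V)$.

For $T \in T^k(V)$, define $\operatorname{Alt}(T) \in T^k(V)$ by

$$\operatorname{Alt}(T)(v_1, \dots, v_k) = \frac{1}{k!} \sum_{\sigma \in S_k} \operatorname{sgn}(\sigma)\, T(v_{\sigma(1)}, \dots, v_{\sigma(k)}).$$

The operator $\operatorname{Alt}\colon T^k(V) \to T^k(V)$ is linear and satisfies:

- $\operatorname{Alt}(T) \in \Lambda^k(V)$ for every $T \in T^k(V)$.

- If $\omega \in \Lambda^k(V)$, then $\operatorname{Alt}(\omega) = \omega$.

- $\operatorname{Alt} \circ \operatorname{Alt} = \operatorname{Alt}$ (idempotent).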

Proof

Linearity of is clear from the definition.

(1). Fix . Substituting in formula [eq:alt-def],

Reindex by ; the map is a bijection of , so the sum is unchanged except for sign:

using . Therefore

By [sigma-action], is alternating.

(2). If is alternating, [sigma-action] gives . Substitute into the definition:

(3). By (1), , so by (2), .
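The three properties can be checked on components. Below is a minimal sketch in plain Python, assuming a tensor on $\mathbb{R}^n$ is stored as a dictionary from 0-based index tuples to its values on basis vectors. The function names and the test tensor (a tensor product of three covectors) are hypothetical, and exact `Fraction` arithmetic keeps the factor $1/k!$ free of rounding.

```python
from fractions import Fraction
from itertools import permutations, product
from math import factorial

def sign(p):
    """Sign of a permutation (tuple of 0-based images) via inversion parity."""
    inv = sum(1 for i in range(len(p)) for j in range(i + 1, len(p)) if p[i] > p[j])
    return -1 if inv % 2 else 1

def alt(T, k, n):
    """Alternator on components: (1/k!) * sum over sigma of sgn(sigma) * T permuted."""
    out = {}
    for idx in product(range(n), repeat=k):
        total = Fraction(0)
        for p in permutations(range(k)):
            total += sign(p) * T[tuple(idx[p[s]] for s in range(k))]
        out[idx] = total / factorial(k)
    return out

n, k = 3, 3
# Hypothetical test tensor a (x) b (x) c with a = (1,2,3), b = (1,4,9), c = (1,8,27).
T = {idx: Fraction((idx[0] + 1) * (idx[1] + 1) ** 2 * (idx[2] + 1) ** 3)
     for idx in product(range(n), repeat=k)}

A = alt(T, k, n)
# Swapping slots (1,2) or (2,3) flips the sign; these swaps generate S_3.
is_alternating = all(A[(j, i, l)] == -A[(i, j, l)] and A[(i, l, j)] == -A[(i, j, l)]
                     for (i, j, l) in A)
# Applying the alternator to an alternating tensor returns it unchanged.
is_idempotent = alt(A, k, n) == A
print(is_alternating, is_idempotent)
```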

The normalisation $1/k!$ is the "analyst's convention" (Spivak [1], Munkres [2]) and makes $\operatorname{Alt}$ a projection. The algebraic literature (Greub [4], Lang [5]) often drops it; this changes constants in formulas for the wedge product but not the algebra.

Everything in this post assumes the base field is $\mathbb{R}$, where $k!$ is invertible for every $k$. The constructions carry over verbatim to any field of characteristic zero, and to characteristic $p$ for the degrees $k < p$ that appear. In characteristic $p \le k$ the alternator has a denominator $k!$ that is not invertible, and the exterior algebra must be built directly from the Grassmann relations instead. We will not need this level of generality for differential forms on real manifolds.

Degree 1. For , is trivial and . Every 1-form is already alternating.

Degree 2. For ,

In particular, for ,

Multiplying by gives the wedge as defined in Section 6.

Degree 3. For , with ,

The even permutations contribute and the three transpositions contribute .

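For $\alpha^1, \dots, \alpha^k \in V^*$ and $v_1, \dots, v_k \in V$,

$$\operatorname{Alt}(\alpha^1 \otimes \dots \otimes \alpha^k)(v_1, \dots, v_k) = \frac{1}{k!} \det\big[\alpha^i(v_j)\big]_{i,j=1}^{k}.$$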

Proof

Expand the definition:

The inner sum is the Leibniz formula for .

This is the key computation for Section 6: combined with bilinearity in and linearity of , it determines on all of , since the tensor products span by [tensor-basis].

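The tensor space splits as

$$T^k(V) = \operatorname{im}(\operatorname{Alt}) \oplus \ker(\operatorname{Alt}),$$

with the first summand equal to $\Lambda^k(V)$. Explicitly, every $T \in T^k(V)$ decomposes uniquely as

$$T = \operatorname{Alt}(T) + \big(T - \operatorname{Alt}(T)\big)$$

with $\operatorname{Alt}(T) \in \Lambda^k(V)$ and $T - \operatorname{Alt}(T) \in \ker(\operatorname{Alt})$.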

Proof

By [alt-properties](3), is an idempotent endomorphism of . Every idempotent on a vector space yields : for any , with and . Uniqueness: if with and , then , forcing .

By [alt-properties](1)-(2), , giving the claim.

A tensor lies in if and only if its signed symmetrisation vanishes. Every symmetric tensor ( for all ) lies in , since pairing sign-alternating coefficients against a fixed value sums to zero when (the signs cancel across versus ). The kernel is strictly larger than the symmetric subspace for : the -representation on decomposes into isotypic components indexed by the irreducible representations of , and is the projector onto the sign-isotypic component. For , the isotypic components other than sign and trivial are nonzero (their multiplicities are given by Schur-Weyl duality in terms of ), so strictly contains the symmetric tensors.

Write the signed permutation action . Then is the group average , which is the standard projection onto the sign-isotypic component of the -representation on . Nothing about this construction is special to vector spaces: the same averaging defines the alternator over any field of characteristic zero.

6. The wedge product

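Assemble all tensor and alternating spaces into direct sums:

$$T^\bullet(V) = \bigoplus_{k \ge 0} T^k(V), \qquad \Lambda^\bullet(V) = \bigoplus_{k=0}^{n} \Lambda^k(V).$$

The bullet "$\bullet$" is shorthand for "all degrees at once." An element of $T^\bullet(V)$ is a finite formal sum $\sum_k T_k$ with $T_k \in T^k(V)$; the integer $k$ is the degree of the component $T_k$. A map $\phi$ between graded spaces is graded of degree $d$ if it sends degree $k$ into degree $k + d$ for every $k$.

The tensor product on $T^\bullet(V)$ does not preserve $\Lambda^\bullet(V)$: if $\omega, \eta \in \Lambda^\bullet(V)$, the tensor $\omega \otimes \eta$ is in general not alternating. We need a product that lives inside $\Lambda^\bullet(V)$.

For $\omega \in \Lambda^k(V)$ and $\eta \in \Lambda^l(V)$, the wedge product $\omega \wedge \eta \in \Lambda^{k+l}(V)$ is

$$\omega \wedge \eta = \frac{(k+l)!}{k!\,l!}\, \operatorname{Alt}(\omega \otimes \eta).$$

The combinatorial factor is chosen so that $\varepsilon^{i_1} \wedge \dots \wedge \varepsilon^{i_k}$ evaluates on basis vectors exactly as a determinant, with no extraneous factorials. We prove this in Section 7.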

Before proving the wedge's structural properties we need a technical but reusable lemma.

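For any $S \in T^k(V)$ and $T \in T^l(V)$,

$$\operatorname{Alt}\big(\operatorname{Alt}(S) \otimes T\big) = \operatorname{Alt}(S \otimes T) = \operatorname{Alt}\big(S \otimes \operatorname{Alt}(T)\big).$$

In particular, if $\operatorname{Alt}(S) = 0$, then $\operatorname{Alt}(S \otimes T) = 0$.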

Proof

We prove the first equality; the second is symmetric. View as the subgroup of fixing . Expand:

For fixed , reindex the outer sum by ; since fixes slots , the -factor is unchanged, and . Each of the choices of produces the identical summand after reindexing. The inner normalisation cancels the copies, leaving

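For $\omega \in \Lambda^k(V)$, $\eta \in \Lambda^l(V)$, $\theta \in \Lambda^m(V)$:

- Bilinearity. $(a\,\omega_1 + b\,\omega_2) \wedge \eta = a\,\omega_1 \wedge \eta + b\,\omega_2 \wedge \eta$ and similarly in the second slot.

- Graded commutativity. $\omega \wedge \eta = (-1)^{kl}\, \eta \wedge \omega$.

- Associativity. $(\omega \wedge \eta) \wedge \theta = \omega \wedge (\eta \wedge \theta)$, and both equal $\frac{(k+l+m)!}{k!\,l!\,m!} \operatorname{Alt}(\omega \otimes \eta \otimes \theta)$.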

Proof

(1). Bilinearity follows from bilinearity of and linearity of .

(2). Let be the shuffle sending to , explicitly for and for . Each of the last arguments must be moved past each of the first arguments, giving transpositions, so . Directly from the definition of ,

Now apply . By the same reindexing argument used in [alt-properties](1), composing with a permutation multiplies by its sign:

Multiply both sides by and use the definition of the wedge:

(3). We compute and show it equals the triple expression. By definition,

The factorials collapse to . By [alt-swallow], . Hence

The identical calculation, applied on the other side with the second form of [alt-swallow], gives . The two agree.

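If $k$ is odd and $\omega \in \Lambda^k(V)$, then $\omega \wedge \omega = 0$.

Proof

By graded commutativity with $\eta = \omega$ and $k$ odd,

$$\omega \wedge \omega = (-1)^{k^2}\, \omega \wedge \omega = -\,\omega \wedge \omega,$$

using that $k^2$ is odd. Hence $2\,\omega \wedge \omega = 0$ in the real vector space $\Lambda^{2k}(V)$, so $\omega \wedge \omega = 0$.

For $k$ even, $\omega \wedge \omega$ can be nonzero. On a symplectic $2n$-manifold, the $n$-fold wedge $\omega^n$ of the symplectic form is a volume form (the Liouville volume form, up to the factor $1/n!$); Liouville's theorem in classical mechanics states that Hamiltonian flows preserve it.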

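A $(k, l)$-shuffle is a permutation $\sigma \in S_{k+l}$ satisfying $\sigma(1) < \dots < \sigma(k)$ and $\sigma(k+1) < \dots < \sigma(k+l)$. Let $\operatorname{Sh}(k, l)$ denote the set of all such. Then for $\omega \in \Lambda^k(V)$ and $\eta \in \Lambda^l(V)$,

$$(\omega \wedge \eta)(v_1, \dots, v_{k+l}) = \sum_{\sigma \in \operatorname{Sh}(k, l)} \operatorname{sgn}(\sigma)\, \omega(v_{\sigma(1)}, \dots, v_{\sigma(k)})\, \eta(v_{\sigma(k+1)}, \dots, v_{\sigma(k+l)}).$$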

Proof

Every decomposes uniquely as where , permutes the first slots, and permutes the last slots: the values of on form a -subset of (this determines ), and then record the internal orderings. So is partitioned into cosets of , each with a unique shuffle representative.

By alternation of and , each summand of labelled by equals times the summand for (the action reshuffles slots inside each of with the corresponding sign). Since , each coset contributes the shuffle term a total of times. Therefore

and multiplying by gives the claim.

The shuffle formula explains the combinatorial factor in [wedge]: the ratio counts the shuffles, equivalently the cosets . It is the standard working formula in differential geometry.
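The shuffle count can be confirmed by brute force. This is a minimal sketch in plain Python that enumerates all permutations of a small set and keeps those increasing on the first $k$ and on the last $l$ slots; the function name `shuffles` is hypothetical and indices are 0-based.

```python
from itertools import permutations
from math import comb

def shuffles(k, l):
    """All (k, l)-shuffles of range(k + l), as tuples of 0-based images."""
    return [p for p in permutations(range(k + l))
            if all(p[i] < p[i + 1] for i in range(k - 1))
            and all(p[i] < p[i + 1] for i in range(k, k + l - 1))]

# Number of shuffles for small k, l, to compare with the binomial coefficient.
counts = {(k, l): len(shuffles(k, l)) for k in range(1, 4) for l in range(1, 4)}
print(all(counts[(k, l)] == comb(k + l, k) for (k, l) in counts))  # prints: True
```

Enumerating all $(k+l)!$ permutations is only sensible for small degrees; choosing the $k$-subset of values directly would scale better.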

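For $\alpha, \beta \in V^*$, formula [eq:wedge-def] with $k = l = 1$ gives $\alpha \wedge \beta = \alpha \otimes \beta - \beta \otimes \alpha$, and

$$(\alpha \wedge \beta)(v, w) = \alpha(v)\beta(w) - \alpha(w)\beta(v) = \det \begin{pmatrix} \alpha(v) & \alpha(w) \\ \beta(v) & \beta(w) \end{pmatrix}.$$

In particular, on $\mathbb{R}^2$ the form $\varepsilon^1 \wedge \varepsilon^2$ evaluated on $v$ and $w$ returns $v^1 w^2 - v^2 w^1$, the signed area of the parallelogram they span.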
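A minimal sketch of the degree-one wedge in plain Python, assuming covectors and vectors on $\mathbb{R}^2$ are stored as coordinate tuples in the standard basis; `pair` and `wedge2` are hypothetical helper names.

```python
def pair(alpha, v):
    """Evaluate the covector alpha on the vector v (both as coordinate tuples)."""
    return sum(a * x for a, x in zip(alpha, v))

def wedge2(alpha, beta):
    """Return the 2-form alpha ^ beta as a function of two vectors."""
    def form(v, w):
        return pair(alpha, v) * pair(beta, w) - pair(alpha, w) * pair(beta, v)
    return form

eps1, eps2 = (1, 0), (0, 1)          # standard dual basis of R^2
area = wedge2(eps1, eps2)

v, w = (3, 1), (1, 2)
print(area(v, w), area(w, v), area(v, v))  # prints: 5 -5 0
```

Swapping the arguments flips the sign and a repeated argument gives zero, which is the alternating property in its smallest instance.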

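7. Basis and dimension of $\Lambda^k(V)$

Fix a basis $(e_1, \dots, e_n)$ of $V$ with dual basis $(\varepsilon^1, \dots, \varepsilon^n)$. The natural alternating $k$-tensors are wedges of distinct dual basis elements.

For any $\alpha^1, \dots, \alpha^k \in V^*$ and any $v_1, \dots, v_k \in V$,

$$(\alpha^1 \wedge \dots \wedge \alpha^k)(v_1, \dots, v_k) = \det\big[\alpha^i(v_j)\big]_{i,j=1}^{k}.$$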

Proof

By the iterated associativity formula in [wedge-properties](3),

Expand the right-hand side on using the definition of and :

The right-hand side is exactly the Leibniz formula for .

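For strictly increasing multi-indices $I = (i_1 < \dots < i_k)$ and $J = (j_1 < \dots < j_k)$,

$$(\varepsilon^{i_1} \wedge \dots \wedge \varepsilon^{i_k})(e_{j_1}, \dots, e_{j_k}) = \begin{cases} 1 & \text{if } I = J, \\ 0 & \text{otherwise.} \end{cases}$$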

Proof

By [wedge-as-det], the left-hand side equals . If the matrix is the identity with determinant . If , then some is not in the (increasing) multi-index , so the -th row of is zero and the determinant vanishes.

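For $0 \le k \le n$, the family

$$\{\, \varepsilon^{i_1} \wedge \dots \wedge \varepsilon^{i_k} : 1 \le i_1 < \dots < i_k \le n \,\}$$

is a basis of $\Lambda^k(V)$. Consequently,

$$\dim \Lambda^k(V) = \binom{n}{k}, \qquad \dim \Lambda^\bullet(V) = \sum_{k=0}^{n} \binom{n}{k} = 2^n.$$

For $k > n$, $\Lambda^k(V) = 0$.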

Proof

Write for an increasing multi-index , and .

Linear independence. Suppose . Evaluating on for an arbitrary increasing and applying [wedge-basis-evaluation],

Hence all .

Spanning. Given , set for each increasing , and define . By [wedge-basis-evaluation], for every increasing . We claim this forces .

Let be arbitrary. If two of the coincide, both and vanish on (they are alternating). Otherwise the entries are distinct; let be the permutation that sorts them into increasing order, with sorted multi-index . By [sigma-action],

So and agree on all tuples of basis vectors, and by multilinearity on all of . Hence lies in the span.

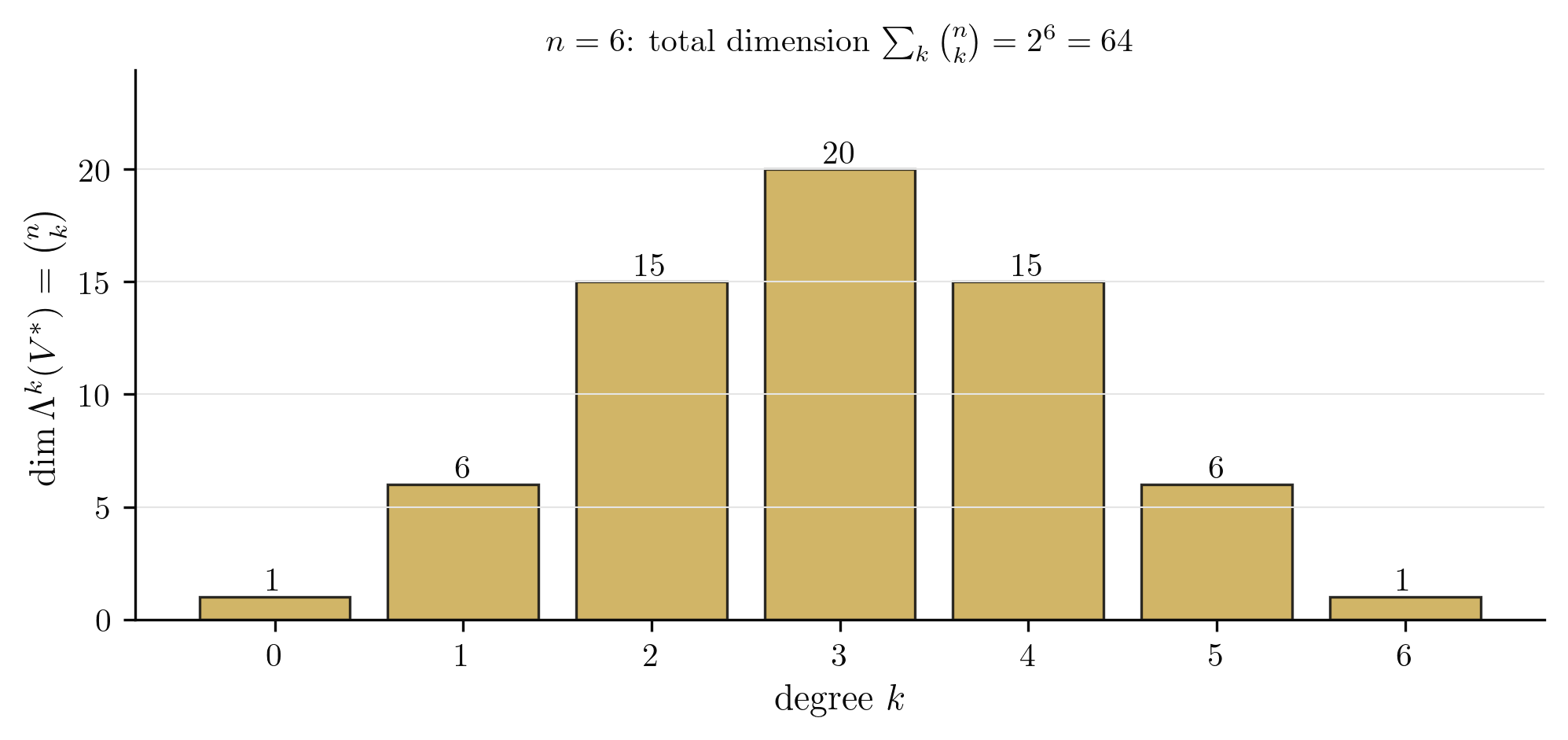

Dimension count. The number of strictly increasing -tuples from is , and the total sum is by the binomial theorem.

Vanishing for . Any vectors in an -dimensional space are linearly dependent, so every alternating -tensor vanishes by [alt-equiv](3).

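The dimensions $\binom{n}{k}$ form the $n$-th row of Pascal's triangle. They are symmetric: $\binom{n}{k} = \binom{n}{n-k}$. This is the shadow of Hodge duality, which we preview in Section 10.

A $k$-form is decomposable (or simple) if it can be written as a single wedge $\alpha^1 \wedge \dots \wedge \alpha^k$ for some $\alpha^i \in V^*$. For $k \in \{0, 1, n-1, n\}$ every form is decomposable, but for $2 \le k \le n-2$ the decomposable forms cut out a proper subvariety of $\Lambda^k(V)$. Example: on $\mathbb{R}^4$ with dual basis $\varepsilon^1, \dots, \varepsilon^4$, the 2-form $\omega = \varepsilon^1 \wedge \varepsilon^2 + \varepsilon^3 \wedge \varepsilon^4$ satisfies $\omega \wedge \omega = 2\,\varepsilon^1 \wedge \varepsilon^2 \wedge \varepsilon^3 \wedge \varepsilon^4 \neq 0$, while any decomposable 2-form $\eta = \alpha \wedge \beta$ has $\eta \wedge \eta = 0$; hence $\omega$ is not decomposable. The set of decomposable $k$-forms (up to scale) is the image of the Plücker embedding of the Grassmannian into $\mathbb{P}(\Lambda^k(V))$; the Plücker relations (quadratic equations on coordinates) cut out this image.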
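The decomposability test $\omega \wedge \omega \neq 0$ can be run mechanically once forms are stored by their coefficients in the basis of increasing wedges. The following is a sketch in plain Python under that assumption: a form is a dictionary from increasing index tuples to coefficients, and the helper names `sort_with_sign` and `wedge` are hypothetical. It uses only bilinearity, graded commutativity, and the vanishing of wedges with a repeated factor.

```python
def sort_with_sign(idx):
    """Sort an index tuple; return (sign, sorted tuple), or (0, None) if an index repeats."""
    if len(set(idx)) < len(idx):
        return 0, None
    inv = sum(1 for a in range(len(idx)) for b in range(a + 1, len(idx)) if idx[a] > idx[b])
    return (-1) ** inv, tuple(sorted(idx))

def wedge(alpha, beta):
    """Wedge of two forms given as {increasing index tuple: coefficient}."""
    out = {}
    for I, x in alpha.items():
        for J, y in beta.items():
            s, K = sort_with_sign(I + J)
            if s:
                out[K] = out.get(K, 0) + s * x * y
    return {K: c for K, c in out.items() if c != 0}

# omega = eps^1 ^ eps^2 + eps^3 ^ eps^4 on R^4 (1-based labels).
omega = {(1, 2): 1, (3, 4): 1}
# A decomposable 2-form: (eps^1 + 2 eps^2) ^ (eps^3 - eps^4).
eta = wedge({(1,): 1, (2,): 2}, {(3,): 1, (4,): -1})

print(wedge(omega, omega))  # prints: {(1, 2, 3, 4): 2}
print(wedge(eta, eta))      # prints: {}
```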

```python
from sympy import binomial

# dim Lambda^k(V) = C(n, k) for an n-dimensional V.
n = 6
dims = [binomial(n, k) for k in range(n + 1)]

print(f"n = {n}")
print(f"dim Lambda^k for k = 0..{n}: {dims}")
print(f"Sum (dim Lambda_bullet): {sum(dims)} = 2^{n} = {2**n}")
print(f"Symmetry: {dims == dims[::-1]}")
```

Dimensions of the exterior algebra

8. Determinants as top forms

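The space $\Lambda^n(V)$ for $n = \dim V$ is one-dimensional by Section 7. This single fact gives the determinant its intrinsic definition.

Let $V$ have dimension $n$ with basis $(e_1, \dots, e_n)$ and dual basis $(\varepsilon^1, \dots, \varepsilon^n)$. The top-degree form $\varepsilon^1 \wedge \dots \wedge \varepsilon^n$ satisfies

$$(\varepsilon^1 \wedge \dots \wedge \varepsilon^n)(v_1, \dots, v_n) = \det\big[v^i_j\big], \qquad v_j = \sum_i v^i_j\, e_i.$$

Consequently, for any linear map $A\colon V \to V$,

$$(\varepsilon^1 \wedge \dots \wedge \varepsilon^n)(Ae_1, \dots, Ae_n) = \det A.$$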

Proof

Apply [wedge-as-det] with and :

since . This is the first statement.

For the second, take . The coordinates of in the basis are the entries of the matrix of : . So .

For linear maps $A, B\colon V \to V$, $\det(AB) = \det(A)\det(B)$.

Proof

The pullback defined by lies in the one-dimensional space , so for a unique scalar . Evaluating on and using [det-as-form] gives . Then

Comparing coefficients of gives the claim.

Since is one-dimensional, any two nonzero top forms satisfy for a unique . The sign of partitions into two connected components, the two orientations of ; picking a nonzero selects one. A top form alone measures only volume ratios: for a parallelepiped spanned by , the number is meaningful only relative to a reference frame declared to have unit volume. The special linear group is the stabiliser of any such (the maps with ), and the full general linear group acts on through the one-dimensional representation . An inner product (Section 10) removes the scale ambiguity by declaring orthonormal frames to have unit volume, producing a canonical element .

```python
from itertools import permutations

from sympy import Matrix, symbols, expand, simplify

# Leibniz formula via exterior-algebra top form on R^3.
a, b, c, d, e, f, g, h, i = symbols('a b c d e f g h i')
M = Matrix([[a, b, c], [d, e, f], [g, h, i]])
n = 3

total = 0
for sigma in permutations(range(n)):
    # Sign of sigma from the parity of its inversion count.
    inv = sum(1 for r in range(n) for s in range(r + 1, n) if sigma[r] > sigma[s])
    sgn = (-1) ** inv
    term = sgn
    # The loop index is named col so that it does not rebind the symbol i.
    for col in range(n):
        term = term * M[sigma[col], col]
    total += term

print("Leibniz sum :", expand(total))
print("M.det() :", expand(M.det()))
print("Match :", simplify(total - M.det()) == 0)
```

Leibniz formula as the top-form evaluation

9. Interior product (contraction)

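The wedge combines a $k$-form and an $l$-form into a $(k+l)$-form. Contraction, also called the interior product, goes the other way. Feeding a single vector into the first slot of a $k$-form produces a $(k-1)$-form that remembers the remaining slots. If you have seen partial function evaluation, fixing one argument of $f(x, y)$ to get a function of $y$, contraction is that same idea applied to an alternating multilinear map.

The smallest nontrivial case lives in $\mathbb{R}^3$. The volume form $\varepsilon^1 \wedge \varepsilon^2 \wedge \varepsilon^3$ eats three vectors and returns a signed volume. Feed it a single vector $v = (v^1, v^2, v^3)$: the result eats two more vectors and returns a number, so it is a 2-form. We will derive (later in this section) the formula

$$\iota_v(\varepsilon^1 \wedge \varepsilon^2 \wedge \varepsilon^3) = v^1\, \varepsilon^2 \wedge \varepsilon^3 + v^2\, \varepsilon^3 \wedge \varepsilon^1 + v^3\, \varepsilon^1 \wedge \varepsilon^2.$$

Integrating this 2-form over an oriented surface $S$ returns the flux of the vector field $v$ through $S$. Contraction is the algebraic operation sitting underneath flux integrals.

Two notations coexist in the literature and mean the same thing: the Greek letter $\iota$ (common in analysis, differential geometry, and physics) and the hooked wedge $\lrcorner$ (common in algebraic geometry). We use $\iota$.

For $v \in V$ and $\omega \in \Lambda^k(V)$ with $k \ge 1$, the interior product $\iota_v \omega \in \Lambda^{k-1}(V)$ is

$$(\iota_v \omega)(v_1, \dots, v_{k-1}) = \omega(v, v_1, \dots, v_{k-1}).$$

For $k = 0$, set $\iota_v \omega = 0$. The interior product is also written $v \lrcorner\, \omega$.

If $\omega \in \Lambda^k(V)$, then $\iota_v \omega \in \Lambda^{k-1}(V)$.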

Proof

Multilinearity of in its arguments is inherited from multilinearity of . For alternation, swap arguments and with :

since is alternating in its last slots. The right-hand side is .

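The interior product satisfies:

- $\iota_v \omega$ is linear in both $v$ and $\omega$.

- $\iota_v \circ \iota_v = 0$.

- Graded Leibniz rule. For $\omega \in \Lambda^k(V)$ and $\eta \in \Lambda^l(V)$,

$$\iota_v(\omega \wedge \eta) = (\iota_v \omega) \wedge \eta + (-1)^k\, \omega \wedge (\iota_v \eta).$$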

Proof

(1). Immediate from the definition.

(2). For with and any ,

because has two equal arguments and is alternating ([alt-equiv]). For , and the convention gives ; for both applications are zero by convention.

(3). We first record a useful formula. For , cofactor expansion of [eq:wedge-as-det-formula] along the column for gives

where the hat denotes omission of the -th factor.

Both sides of the Leibniz claim are bilinear in , and every element of (resp. ) is a linear combination of decomposable wedges by [lambda-k-basis]. So it suffices to verify on and .

Applying [eq:iota-decomp] to the -fold wedge ,

The factor in the second sum splits off a . Applying [eq:iota-decomp] separately to and ,

Adding the first and times the second recovers term-for-term.

Let and . Using the graded Leibniz rule on ,

This is the classical identification of a vector with a 2-form in , which is how gets encoded when we move to differential forms in Part III.
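The contraction of basis wedges, with the alternating sign and the omitted factor, can be sketched in plain Python. The sketch assumes a form is stored as a dictionary from increasing 0-based index tuples to coefficients and a vector as a coordinate tuple; the function name `interior` is hypothetical.

```python
def interior(v, omega):
    """Contract omega ({increasing 0-based index tuple: coeff}) with the vector v."""
    out = {}
    for I, c in omega.items():
        for pos, i in enumerate(I):
            # Omit the factor in position pos; it contributes (-1)^pos * v^i.
            J = I[:pos] + I[pos + 1:]
            out[J] = out.get(J, 0) + (-1) ** pos * v[i] * c
    return {J: c for J, c in out.items() if c != 0}

vol = {(0, 1, 2): 1}        # the volume form on R^3
v = (2, 3, 5)

flux_form = interior(v, vol)
print(flux_form)                 # prints: {(1, 2): 2, (0, 2): -3, (0, 1): 5}
print(interior(v, flux_form))    # prints: {}
```

The coefficient $-3$ on the index pair $(0, 2)$ is the same as $+3$ on the cyclic ordering $(2, 0)$, and the empty second result illustrates that contracting twice with the same vector gives zero.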

10. Inner product and the Hodge star

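Up to this point everything has been intrinsic: no metric, no basis privileged. An inner product on $V$ gives extra structure, including the Hodge star isomorphism $\star\colon \Lambda^k(V) \to \Lambda^{n-k}(V)$.

Let $\langle \cdot, \cdot \rangle$ be a positive-definite inner product on $V$ with orthonormal basis $(e_1, \dots, e_n)$ and dual basis $(\varepsilon^1, \dots, \varepsilon^n)$. Define an inner product on $\Lambda^k(V)$ by declaring the family $\{\varepsilon^{i_1} \wedge \dots \wedge \varepsilon^{i_k} : i_1 < \dots < i_k\}$ orthonormal. Equivalently, for decomposable forms,

$$\langle \alpha^1 \wedge \dots \wedge \alpha^k,\ \beta^1 \wedge \dots \wedge \beta^k \rangle = \det\big[\langle \alpha^i, \beta^j \rangle\big],$$

where $\langle \alpha^i, \beta^j \rangle$ is the inner product on $V^*$ dual to the one on $V$.

Fix an orientation on $V$ (a choice of nonzero element of $\Lambda^n(V)$ up to positive scaling) and let $\operatorname{vol} \in \Lambda^n(V)$ be the associated unit volume form. The Hodge star is the unique linear map $\star\colon \Lambda^k(V) \to \Lambda^{n-k}(V)$ satisfying

$$\alpha \wedge \star\beta = \langle \alpha, \beta \rangle\, \operatorname{vol} \qquad \text{for all } \alpha, \beta \in \Lambda^k(V).$$

For an increasing multi-index $I$, let $I^c$ denote the increasing complementary multi-index, and define

$$\operatorname{sgn}(I, I^c),$$

the sign of the permutation listing $I$ followed by $I^c$. Then

$$\star\, \varepsilon^I = \operatorname{sgn}(I, I^c)\, \varepsilon^{I^c}.$$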

Proof

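Write $e^I = e^{i_1} \wedge \cdots \wedge e^{i_k}$ and similarly $e^J$. The defining relation [eq:hodge-star-def-eq] is linear in $\beta$, so $\star$ is determined once we know $\star e^I$ for every increasing $I$ of length $k$.

Take $\beta = e^I$ and expand $\star e^I = \sum_K c_K\, e^K$ over increasing $(n-k)$-multi-indices $K$. On one hand,

$$e^J \wedge \star e^I = \langle e^J, e^I \rangle\, \mathrm{vol} = \delta_{JI}\, \mathrm{vol}.$$

On the other, distributing the wedge,

$$e^J \wedge \star e^I = \sum_K c_K\, e^J \wedge e^K.$$

The wedge $e^J \wedge e^K$ vanishes unless the sets $J$ and $K$ are disjoint and cover $\{1, \dots, n\}$ (any repeated factor sends the wedge to zero). Since $K$ is increasing, it is uniquely determined as $K = J^c$ in that case, and $e^J \wedge e^{J^c} = \varepsilon(J)\, \mathrm{vol}$. Hence

$$e^J \wedge \star e^I = c_{J^c}\, \varepsilon(J)\, \mathrm{vol}.$$

Comparing the two expressions, $c_{J^c}\, \varepsilon(J) = \delta_{JI}$ for every increasing $J$ of length $k$. Setting $J = I$ gives $c_{I^c} = \varepsilon(I)$ (using $\varepsilon(I)^2 = 1$); for $J \neq I$, $c_{J^c} = 0$. Since $J \mapsto J^c$ is a bijection on increasing multi-indices of complementary lengths, this fixes every $c_K$, giving $\star e^I = \varepsilon(I)\, e^{I^c}$. Linear extension then determines $\star$ on all of $\Lambda^k(V^*)$.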

On $\Lambda^k(V^*)$ with a positive-definite metric, $\star\star = (-1)^{k(n-k)}$.

Proof

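By linearity it suffices to check on the basis $e^I$ of [hodge-basis]. Applying $\star$ twice,

$$\star\star e^I = \varepsilon(I)\, \star e^{I^c} = \varepsilon(I)\, \varepsilon(I^c)\, e^I.$$

The permutation sending $(I, I^c) \mapsto (I^c, I)$ is the cyclic swap of two blocks of sizes $k$ and $n-k$, which is a product of $k(n-k)$ transpositions (same shuffle argument as in [wedge-properties](2)). Hence $\varepsilon(I)\, \varepsilon(I^c) = (-1)^{k(n-k)}$.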

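In $\mathbb{R}^3$ with the standard metric and orientation:

$$\star 1 = e^1 \wedge e^2 \wedge e^3, \qquad \star e^1 = e^2 \wedge e^3, \quad \star e^2 = e^3 \wedge e^1, \quad \star e^3 = e^1 \wedge e^2, \qquad \star(e^1 \wedge e^2 \wedge e^3) = 1.$$

The star identifies 1-forms with 2-forms in $\mathbb{R}^3$, which is the algebraic reason that the cross product, realised as $\star(\alpha \wedge \beta)$, returns a vector only in dimension three: the wedge of two 1-forms is a 2-form, and $\star$ sends 2-forms to 1-forms exactly when $n - 2 = 1$. In Part III we will see that this same isomorphism converts $\operatorname{curl}$ and $\operatorname{div}$ into the exterior derivative.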
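The basis formula for the star and the sign $(-1)^{k(n-k)}$ of the double star can be checked combinatorially. The following is a sketch under the assumption that basis forms are stored as increasing index tuples; the helper names `hodge_on_basis` and `star_star_sign` are hypothetical and exist only for this illustration.

```python
from itertools import combinations
from sympy.combinatorics import Permutation

def hodge_on_basis(I, n):
    """Return (sign, I_c) such that star e^I = sign * e^{I_c},
    where sign is the signature of the permutation (I, I_c) of 1..n."""
    I_c = tuple(i for i in range(1, n + 1) if i not in I)
    # Permutation expects 0-based array form.
    perm = Permutation([i - 1 for i in I + I_c])
    return perm.signature(), I_c

def star_star_sign(I, n):
    """Scalar by which star o star acts on the basis form e^I."""
    s1, I_c = hodge_on_basis(I, n)
    s2, back = hodge_on_basis(I_c, n)
    assert back == I  # complementing twice returns the original index set
    return s1 * s2

# Table for R^3: each increasing multi-index with its star.
for k in range(4):
    for I in combinations(range(1, 4), k):
        sign, I_c = hodge_on_basis(I, 3)
        print(f"star e^{I} = {sign:+d} * e^{I_c}")

# Check star o star = (-1)^(k(n-k)) on every basis form for n = 1..5.
double_star_ok = all(
    star_star_sign(I, n) == (-1) ** (len(I) * (n - len(I)))
    for n in range(1, 6)
    for k in range(n + 1)
    for I in combinations(range(1, n + 1), k)
)
print("star star = (-1)^(k(n-k)) for n <= 5:", double_star_ok)
```

A negative sign in the table corresponds to reordering the complementary wedge, for example $-\,e^1 \wedge e^3 = e^3 \wedge e^1$.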

11. Pullback of alternating forms

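A linear map $A : V \to W$ does not in general push forms forward, but it does pull them back.

Let $A : V \to W$ be linear and $\omega \in \Lambda^k(W^*)$. The pullback $A^*\omega \in \Lambda^k(V^*)$ is

$$(A^*\omega)(v_1, \dots, v_k) = \omega(A v_1, \dots, A v_k).$$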

The pullback satisfies:

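- Linearity. $A^*(\omega + \eta) = A^*\omega + A^*\eta$ and $A^*(c\,\omega) = c\, A^*\omega$.

- Product compatibility. $A^*(\omega \wedge \eta) = A^*\omega \wedge A^*\eta$.

- Functoriality. $(B \circ A)^* = A^* \circ B^*$ and $\mathrm{id}^* = \mathrm{id}$.

- Top-degree scaling. If $W = V$ with $\dim V = n$ and $\omega \in \Lambda^n(V^*)$, then $A^*\omega = \det(A)\, \omega$.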

Proof

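(1). For any $v_1, \dots, v_k \in V$,

$$A^*(\omega + \eta)(v_1, \dots, v_k) = (\omega + \eta)(A v_1, \dots, A v_k) = \omega(A v_1, \dots, A v_k) + \eta(A v_1, \dots, A v_k) = (A^*\omega + A^*\eta)(v_1, \dots, v_k).$$

Scalar compatibility is identical.

(2). Both sides are bilinear in $(\omega, \eta)$ (by (1) and bilinearity of $\wedge$), so it suffices to check on decomposable forms $\omega = \alpha^1 \wedge \cdots \wedge \alpha^k$ and $\eta = \alpha^{k+1} \wedge \cdots \wedge \alpha^{k+\ell}$. Note first that for $\alpha \in W^*$, $A^*\alpha = \alpha \circ A$. By [wedge-as-det],

$$A^*(\alpha^1 \wedge \cdots \wedge \alpha^r)(v_1, \dots, v_r) = \det\big[\alpha^i(A v_j)\big],$$

where $r = k + \ell$. Since $\alpha^i(A v_j) = (A^*\alpha^i)(v_j)$,

$$\det\big[\alpha^i(A v_j)\big] = \det\big[(A^*\alpha^i)(v_j)\big] = (A^*\alpha^1 \wedge \cdots \wedge A^*\alpha^r)(v_1, \dots, v_r),$$

applying [wedge-as-det] in the other direction. The right-hand side equals $(A^*\omega \wedge A^*\eta)(v_1, \dots, v_r)$.

(3). For $A : U \to V$, $B : V \to W$, and $\omega \in \Lambda^k(W^*)$,

$$\big((B \circ A)^*\omega\big)(u_1, \dots, u_k) = \omega(B A u_1, \dots, B A u_k) = (B^*\omega)(A u_1, \dots, A u_k) = (A^* B^*\omega)(u_1, \dots, u_k).$$

The identity case is immediate.

(4). Since $\Lambda^n(V^*)$ is one-dimensional, $A^*\omega = c\, \omega$ for a scalar $c$ depending only on $A$. Take $\omega = \omega_0 = e^1 \wedge \cdots \wedge e^n$ and evaluate on $(e_1, \dots, e_n)$:

$$(A^*\omega_0)(e_1, \dots, e_n) = \omega_0(A e_1, \dots, A e_n) = \det(A)$$

by [det-as-form], while the right-hand side is $c\, \omega_0(e_1, \dots, e_n) = c$. Hence $c = \det(A)$.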

Property (4) is the algebraic fossil of the change-of-variables theorem in multivariable integration. When we integrate an -form over a region and pull it back along a diffeomorphism, the Jacobian determinant appears automatically: no absolute value, because the orientation is built into the form. This is why forms are the right objects to integrate. We will develop this fully in Part IV.

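Fix bases $e_1, \dots, e_n$ of $V$ and $f_1, \dots, f_m$ of $W$, with dual bases $e^1, \dots, e^n$ and $f^1, \dots, f^m$. Let $A$ have matrix $[a_{ij}]$ with $A e_j = \sum_i a_{ij}\, f_i$. On 1-forms, $A^* f^i = \sum_j a_{ij}\, e^j$, so $A^*$ sends the row vector of a covector $\alpha$ to the row vector $\alpha A$; written on column coordinates, the matrix of $A^*$ is $A^{\mathsf{T}}$.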

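On higher-degree forms, property (2) of [pullback-properties] and the determinant formula from [wedge-as-det] give

$$A^* f^I = \sum_{J} \det(A_{I,J})\, e^J,$$

where the sum runs over increasing $k$-multi-indices $J$ and $A_{I,J}$ is the $k \times k$ submatrix of $A$ with rows $I$ and columns $J$.

The matrix of $A^*$ in the ordered bases indexed by increasing multi-indices is the matrix of $k \times k$ minors of $A$: entry $(J, I)$ is $\det(A_{I,J})$, with the rows of $A$ indexed by $I$ and its columns by $J$. This matrix is the $k$-th exterior power $\Lambda^k(A^{\mathsf{T}})$, of size $\binom{n}{k} \times \binom{m}{k}$, where $n = \dim V$ and $m = \dim W$. Cauchy-Binet is the identity $\Lambda^k(AB) = \Lambda^k(A)\, \Lambda^k(B)$ at the level of minor matrices, which is a coordinate statement of functoriality from [pullback-properties](3).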

For $W = V$ and $k = n$, the minor matrix has a single entry equal to $\det(A)$, recovering $A^*\omega_0 = \det(A)\, \omega_0$ from part (4).
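The minor-matrix description can be tested directly on symbolic $3 \times 3$ matrices. The sketch below assumes the convention that the matrix of $k \times k$ minors has rows indexed by the row subsets of $A$ and columns by its column subsets (so the matrix of $A^*$ is its transpose); the helper `compound` is a hypothetical name, not a SymPy function.

```python
from itertools import combinations
from sympy import Matrix, Symbol, expand, zeros

def compound(M, k):
    """Matrix of k x k minors of M: entry (I, J) is det of the submatrix
    with rows I and columns J, subsets taken in lexicographic order."""
    row_sets = list(combinations(range(M.rows), k))
    col_sets = list(combinations(range(M.cols), k))
    return Matrix(len(row_sets), len(col_sets), lambda r, c:
                  M.extract(list(row_sets[int(r)]), list(col_sets[int(c)]))
                   .det(method='berkowitz'))

A = Matrix(3, 3, lambda i, j: Symbol(f"a{i + 1}{j + 1}"))
B = Matrix(3, 3, lambda i, j: Symbol(f"b{i + 1}{j + 1}"))

# Cauchy-Binet: minors of a product are products of minor matrices.
cauchy_binet_ok = (compound(A * B, 2)
                   - compound(A, 2) * compound(B, 2)).expand() == zeros(3, 3)

# Transposing A transposes its minor matrix.
transpose_ok = (compound(A.T, 2) - compound(A, 2).T).expand() == zeros(3, 3)

# Top degree: a single entry, equal to det(A).
top_degree_ok = expand(compound(A, 3)[0, 0] - A.det()) == 0

print("Cauchy-Binet for 2x2 minors:", cauchy_binet_ok)
print("Minors of the transpose:", transpose_ok)
print("Top-degree entry is det(A):", top_degree_ok)
```

The first check is the coordinate form of functoriality; the last one is the case $k = n$ discussed above.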

from sympy import Matrix, symbols, simplify

# Verify pullback of a top form: A^*(omega_0) = det(A) * omega_0.

a11, a12, a13, a21, a22, a23, a31, a32, a33 = symbols('a11 a12 a13 a21 a22 a23 a31 a32 a33')

A = Matrix([[a11, a12, a13], [a21, a22, a23], [a31, a32, a33]])

e = [Matrix([1, 0, 0]), Matrix([0, 1, 0]), Matrix([0, 0, 1])]

cols = [A * ej for ej in e]

pulled_back_value = Matrix.hstack(*cols).det()

print("A^*(omega_0)(e1, e2, e3) =", simplify(pulled_back_value))

print("det(A) =", simplify(A.det()))

print("Equal:", simplify(pulled_back_value - A.det()) == 0)

Pullback of a top form scales by det

12. Looking ahead

Everything in this post is purely algebraic: $V$ is a single vector space, not a manifold, and $\Lambda^k(V^*)$ is a finite-dimensional real vector space. In Part III we will glue a copy of $\Lambda^k(T_p^*M)$ to every point $p$ of a smooth manifold $M$ and ask for smooth sections: these are differential $k$-forms on $M$. Bott and Tu [6] is the standard reference for the topological perspective that follows. The wedge product and the interior product will extend pointwise. The pullback will extend along smooth maps. The Hodge star will need a Riemannian metric and will give us $\star : \Lambda^k(T_p^*M) \to \Lambda^{n-k}(T_p^*M)$ on each fibre.

The one genuinely new operation in Part III, the one that does not live at a single point, is the exterior derivative $d$. It is characterised by linearity, the graded Leibniz rule, $d^2 = 0$, and its action on functions (where it is the ordinary differential). Everything we have built here, the algebra of the wedge and the sign it picks up when two factors are transposed, is what makes $d^2 = 0$ a triviality rather than a theorem.

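With $d$ in hand, the classical identities

$$\operatorname{curl}(\operatorname{grad} f) = 0, \qquad \operatorname{div}(\operatorname{curl} \mathbf{F}) = 0$$

will both be the single equation $d^2 = 0$, read through the Hodge star of Section 10. Integration of forms (Part IV) and Stokes' theorem (Part V) will follow.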
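The two classical identities can already be confirmed in coordinates, before any forms appear. This is a sketch with hand-written `grad`, `curl`, and `div` helpers (hypothetical names defined here, not SymPy's vector module); it relies on SymPy treating mixed partial derivatives of an undefined function as equal, which is the analytic fact behind $d^2 = 0$.

```python
from sympy import Function, diff, simplify, symbols

x, y, z = symbols('x y z')
f = Function('f')(x, y, z)
F = [Function(name)(x, y, z) for name in ('F1', 'F2', 'F3')]

def grad(g):
    """Gradient of a scalar field in Cartesian coordinates."""
    return [diff(g, s) for s in (x, y, z)]

def curl(G):
    """Curl of a vector field given as a list of three components."""
    return [diff(G[2], y) - diff(G[1], z),
            diff(G[0], z) - diff(G[2], x),
            diff(G[1], x) - diff(G[0], y)]

def div(G):
    """Divergence of a vector field given as a list of three components."""
    return diff(G[0], x) + diff(G[1], y) + diff(G[2], z)

curl_of_grad = [simplify(c) for c in curl(grad(f))]
div_of_curl = simplify(div(curl(F)))

print("curl(grad f) =", curl_of_grad)
print("div(curl F) =", div_of_curl)
```

Both results vanish because each term is a difference of second partial derivatives taken in opposite orders, paired by the antisymmetry of the construction.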

References

- M. Spivak, Calculus on Manifolds: A Modern Approach to Classical Theorems of Advanced Calculus, W.A. Benjamin, 1965. Chapter 4.

- J.R. Munkres, Analysis on Manifolds, Addison-Wesley, 1991. Chapters 6 and 7.

- L.W. Tu, An Introduction to Manifolds, 2nd ed., Springer, 2011. Chapter 1, Sections 3 to 4.

- W. Greub, Multilinear Algebra, 2nd ed., Springer, 1978.

- S. Lang, Algebra, 3rd ed., Springer, 2002. Chapter XIX (exterior algebra).

- R. Bott and L.W. Tu, Differential Forms in Algebraic Topology, Springer, 1982. Chapter 1.