Analysis on Manifolds I: Analysis on $\mathbb{R}^n$

Part I of a five-part lecture series on differential forms and the generalised Stokes theorem.

This lecture includes SymPy cells that verify key results symbolically. SymPy is a Python library for symbolic mathematics.

0. Why this series exists

The standard calculus sequence teaches a collection of computational techniques: differentiate polynomials, integrate by parts, compute a line integral, apply Green's theorem. These techniques work, and they produce correct answers. But they hide the geometry. The divergence theorem in $\mathbb{R}^3$, Green's theorem in $\mathbb{R}^2$, and the fundamental theorem of calculus on $[a, b]$ appear to be three unrelated results. They are not. They are one theorem, applied to differential forms of degree 2, 1, and 0 respectively.

The operators $\operatorname{grad}$, $\operatorname{curl}$, and $\operatorname{div}$ that appear throughout multivariable calculus are likewise not three separate objects. They are the same operator, the exterior derivative $d$, acting on forms of different degrees. The identity $\operatorname{curl}(\operatorname{grad} f) = 0$ that students are asked to verify by computation is the single equation $d^2 = 0$, which is immediate from the definition. The fact that it requires a computation to verify in standard notation is a failure of the notation, not a depth of the mathematics.

This series builds the machinery to see that unity. We start from the topology of $\mathbb{R}^n$ and the derivative as a linear map (this post), move through exterior algebra (Part II) and differential forms on manifolds (Part III), develop integration of forms (Part IV), and arrive at the generalised Stokes theorem (Part V):

$$\int_M d\omega = \int_{\partial M} \omega,$$

along with its classical special cases as corollaries.

We assume nothing beyond the concept of a function and basic arithmetic. We do not assume knowledge of differentiation, integration, or linear algebra. Everything is built from scratch.

1. $\mathbb{R}^n$ as a metric space

1.1. Euclidean space

We begin with the space we will work in throughout this post.

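For any positive integer $n$, the $n$-dimensional Euclidean space $\mathbb{R}^n$ is the set of all ordered $n$-tuples of real numbers:

$$\mathbb{R}^n = \{(x_1, \dots, x_n) : x_i \in \mathbb{R} \text{ for } i = 1, \dots, n\}.$$

An element $x = (x_1, \dots, x_n) \in \mathbb{R}^n$ is called a point. The numbers $x_1, \dots, x_n$ are its coordinates.

$\mathbb{R}^1$ is the real line. $\mathbb{R}^2$ is the coordinate plane. $\mathbb{R}^3$ is the space of everyday geometry.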

We called elements of $\mathbb{R}^n$ "points," but we will also add them, subtract them, and scale them, which is the language of vectors. This is deliberate. As a bare set, $\mathbb{R}^n$ has no algebraic structure: its elements are points, locations in space, with no distinguished origin and no notion of addition. But $\mathbb{R}^n$ also carries the structure of a vector space (with componentwise addition and scalar multiplication), and it carries a metric (the Euclidean distance we define below). The same ordered $n$-tuple plays both roles simultaneously.

In linear algebra and physics courses, textbooks often insist on a sharp distinction: "a point is a location, a vector is a displacement." On a general manifold, this distinction is essential, because the tangent space at a point (where vectors live) is a genuinely different space from the manifold itself (where points live). But in $\mathbb{R}^n$, there is a canonical identification: the vector from the origin to the point $x$ is $x$ itself, and conversely every vector determines a unique point. This identification is possible precisely because $\mathbb{R}^n$ is both a vector space and a metric space, with a global coordinate system and a distinguished origin.

Throughout this post, we will freely treat elements of $\mathbb{R}^n$ as both points (in definitions of open sets, convergence, and continuity) and vectors (when we add, subtract, or take norms). The notation $x \in \mathbb{R}^n$ serves both purposes. In Part III, when we move to manifolds, this conflation will no longer be available, and the distinction will become unavoidable.

Before we equip $\mathbb{R}^n$ with any geometric structure, we need the language of set operations. These will appear constantly in what follows.

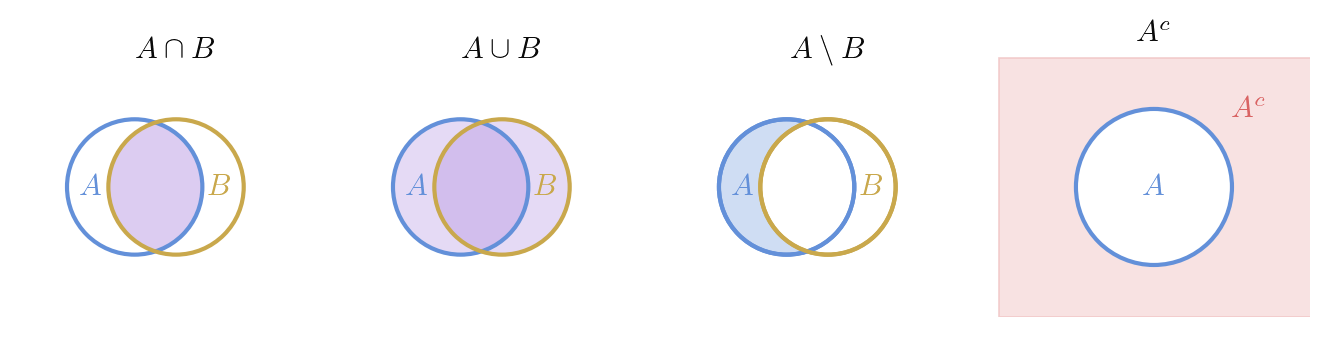

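Let $A$ and $B$ be subsets of some ambient set $X$. We define:

- Union: $A \cup B = \{x \in X : x \in A \text{ or } x \in B\}$.

- Intersection: $A \cap B = \{x \in X : x \in A \text{ and } x \in B\}$.

- Set difference: $A \setminus B = \{x \in A : x \notin B\}$.

- Complement: $A^c = X \setminus A$.

- Subset: $A \subseteq B$ means every element of $A$ belongs to $B$.

- Proper subset: $A \subsetneq B$ means $A \subseteq B$ and $A \neq B$.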

In topology, we frequently take unions and intersections over families of sets, not just pairs. The notation generalizes as follows.

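Let $\{A_\alpha\}_{\alpha \in I}$ be a collection of subsets of $X$ indexed by some set $I$. We define:

$$\bigcup_{\alpha \in I} A_\alpha = \{x \in X : x \in A_\alpha \text{ for some } \alpha \in I\}, \qquad \bigcap_{\alpha \in I} A_\alpha = \{x \in X : x \in A_\alpha \text{ for all } \alpha \in I\}.$$

The index set $I$ can be finite, countably infinite, or uncountable.

De Morgan's laws extend to arbitrary families: $\left(\bigcup_{\alpha} A_\alpha\right)^c = \bigcap_{\alpha} A_\alpha^c$ and $\left(\bigcap_{\alpha} A_\alpha\right)^c = \bigcup_{\alpha} A_\alpha^c$. These identities convert statements about unions into statements about intersections and vice versa, and will be essential when we prove that intersections of closed sets are closed.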
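As a quick sanity check, De Morgan's laws can be tested on a small finite family using Python's built-in sets. This is only an illustrative sketch: the ambient set `X`, the family, and the helper `complement` are hypothetical names chosen for this example, and a finite check is of course not a proof for arbitrary index sets.

```python
# Hypothetical finite example: an ambient set X and an indexed family of subsets.
X = set(range(10))
family = {
    "a": {0, 1, 2, 3},
    "b": {2, 3, 4, 5},
    "c": {3, 5, 7, 9},
}

def complement(A):
    """Complement of A relative to the ambient set X."""
    return X - A

union_all = set().union(*family.values())
intersection_all = set(X).intersection(*family.values())

# De Morgan: the complement of a union is the intersection of the complements,
# and the complement of an intersection is the union of the complements.
lhs_union = complement(union_all)
rhs_union = set(X).intersection(*(complement(A) for A in family.values()))
lhs_inter = complement(intersection_all)
rhs_inter = set().union(*(complement(A) for A in family.values()))

print(lhs_union == rhs_union, lhs_inter == rhs_inter)
```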

To do analysis, we need a notion of distance. This is what separates a set from a space.

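The Euclidean norm (or length) of a point $x = (x_1, \dots, x_n) \in \mathbb{R}^n$ is

$$\|x\| = \sqrt{x_1^2 + \cdots + x_n^2}.$$

The Euclidean distance between two points $x, y \in \mathbb{R}^n$ is

$$d(x, y) = \|x - y\|.$$

The pair $(\mathbb{R}^n, d)$ is a metric space: the function $d$ is non-negative, symmetric, zero only when $x = y$, and satisfies the triangle inequality $d(x, z) \le d(x, y) + d(y, z)$. We will not prove the triangle inequality here (it follows from the Cauchy-Schwarz inequality), but we will use it freely.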
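The metric axioms can be spot-checked on concrete points. The sketch below uses SymPy's `Matrix.norm()` for exact Euclidean distances; the sample points and the helper `dist` are hypothetical choices for illustration, and the triangle inequality is compared in floating point with a small tolerance rather than symbolically.

```python
from itertools import product
from sympy import Matrix, Rational

def dist(p, q):
    """Euclidean distance d(p, q) = ||p - q||, computed exactly."""
    return (p - q).norm()

# Hypothetical sample points in R^3, chosen only for illustration.
points = [
    Matrix([0, 0, 0]),
    Matrix([1, 2, 2]),
    Matrix([Rational(1, 2), -1, 3]),
    Matrix([-2, 0, 1]),
]

# Symmetry: d(p, q) = d(q, p) for every pair.
symmetric = all(dist(p, q) == dist(q, p) for p, q in product(points, repeat=2))

# Triangle inequality: d(p, r) <= d(p, q) + d(q, r) for every triple.
triangle = all(
    float(dist(p, r)) <= float(dist(p, q) + dist(q, r)) + 1e-12
    for p, q, r in product(points, repeat=3)
)

print(dist(points[0], points[1]))
print(symmetric, triangle)
```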

1.2. Open balls and open sets

The central objects of topology are open sets. We build them from open balls.

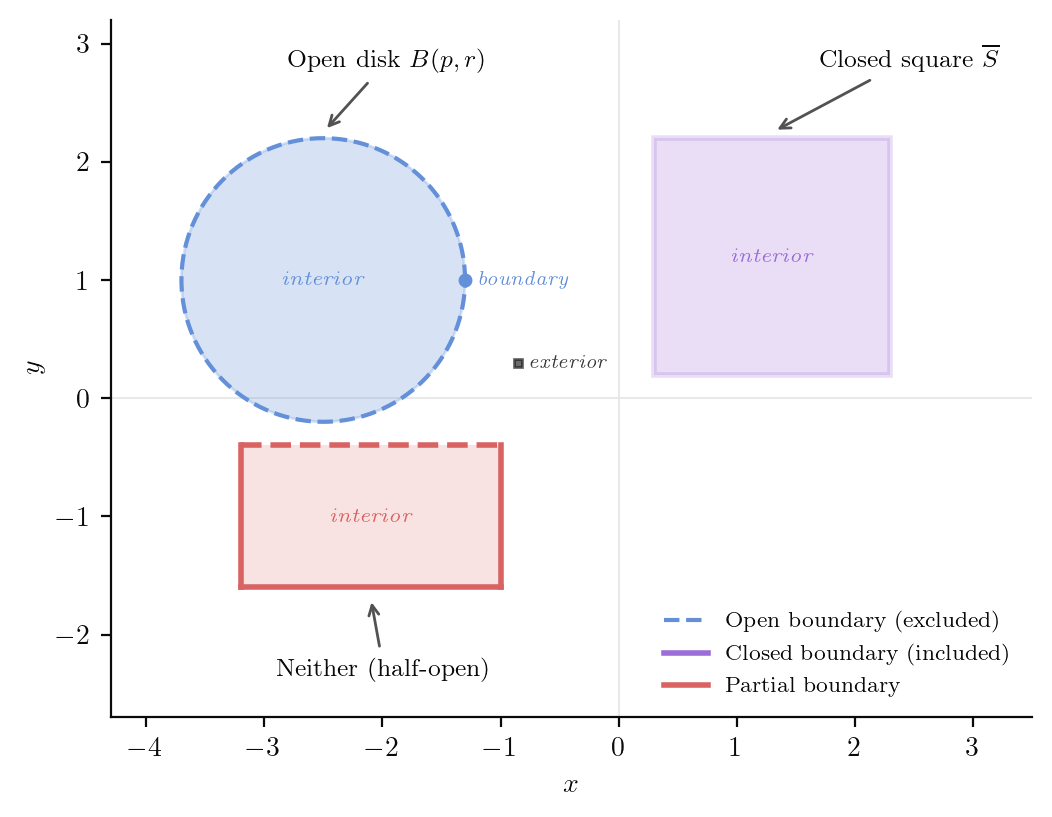

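Let $a \in \mathbb{R}^n$ and $r > 0$. The open ball of radius $r$ centred at $a$ is

$$B(a, r) = \{x \in \mathbb{R}^n : \|x - a\| < r\}.$$

In $\mathbb{R}^1$, this is the open interval $(a - r, a + r)$. In $\mathbb{R}^2$, it is the interior of a disk. In $\mathbb{R}^3$, the interior of a sphere.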

Drag the gold point inside each region above. The blue disk is the largest open ball centred at that point fitting entirely inside the set. The ball shrinks as the point approaches the boundary, which is precisely the content of "openness": at every interior point, you can fit an open ball.

A subset $U \subseteq \mathbb{R}^n$ is open if for every $x \in U$, there exists $r > 0$ such that $B(x, r) \subseteq U$.

In words: $U$ is open if every point in $U$ has some breathing room. No point in an open set sits on its edge. This is exactly what the visualisation above demonstrates: at every interior point, there is a positive-radius ball that stays inside the set.

Misconception from school calculus. Students often learn that "open" means "endpoints not included," as in the interval $(a, b)$. This is correct for subsets of $\mathbb{R}$, but it captures only a shadow of the real definition. The set $\{(x, y) \in \mathbb{R}^2 : x^2 + y^2 < 1\}$ is open in $\mathbb{R}^2$. It has no "endpoints," but the definition above applies: every point inside the unit disk has a positive-radius ball fitting inside it. The topological definition subsumes the interval-based intuition.

Every open ball is an open set.

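Proof idea. Given a point $x$ inside the ball $B(a, r)$, the ball around $x$ with radius equal to the remaining gap $r - \|x - a\|$ fits inside.

Proof. Let $x \in B(a, r)$. Set $s = r - \|x - a\| > 0$. We claim $B(x, s) \subseteq B(a, r)$. For any $y \in B(x, s)$,

$$\|y - a\| \le \|y - x\| + \|x - a\| < s + \|x - a\| = r,$$

so $y \in B(a, r)$.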

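- (1) $\emptyset$ and $\mathbb{R}^n$ are open.

- (2) The intersection of finitely many open sets is open.

- (3) The union of any collection of open sets (finite, countable, or uncountable) is open.

Proof. (1) Every point of $\mathbb{R}^n$ has an open ball around it (take any $r > 0$). The empty set is vacuously open.

(2) Let $U_1, \dots, U_k$ be open and let $x \in U_1 \cap \cdots \cap U_k$. For each $i$, there exists $r_i > 0$ with $B(x, r_i) \subseteq U_i$. Set $r = \min\{r_1, \dots, r_k\} > 0$. Then $B(x, r) \subseteq U_i$ for all $i$, so $B(x, r) \subseteq U_1 \cap \cdots \cap U_k$.

(3) Let $\{U_\alpha\}_{\alpha \in I}$ be a collection of open sets and let $x \in \bigcup_\alpha U_\alpha$. Then $x \in U_\beta$ for some $\beta \in I$, so there exists $r > 0$ with $B(x, r) \subseteq U_\beta \subseteq \bigcup_\alpha U_\alpha$.

Property (2) requires finiteness. The intersection $\bigcap_{k=1}^{\infty} (-1/k, 1/k) = \{0\}$ is a single point, which is not open in $\mathbb{R}$.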
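The failure of property (2) for infinite families can be seen by watching finite intersections of the intervals $(-1/k, 1/k)$ shrink. The sketch below uses SymPy's `Interval` and `Intersection`; the helper `finite_intersection` and the test point `x0` are hypothetical names introduced for this illustration.

```python
from sympy import Interval, Intersection, Rational

def finite_intersection(n):
    """Intersection of the open intervals (-1/k, 1/k) for k = 1..n."""
    return Intersection(*[Interval.open(-Rational(1, k), Rational(1, k))
                          for k in range(1, n + 1)])

# Every finite intersection is still an open interval around 0.
for n in (1, 5, 50):
    print(n, finite_intersection(n))

# But any fixed x0 != 0 is eventually excluded, so only 0 survives in the limit.
x0 = Rational(1, 1000)
first_excluding_k = next(
    k for k in range(1, 10**4)
    if x0 not in Interval.open(-Rational(1, k), Rational(1, k))
)
print(first_excluding_k)
```

Each finite stage leaves room around $0$, but the room shrinks to nothing, which is why the infinite intersection contains no open ball.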

1.3. Closed sets, interior, closure, boundary

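A subset $C \subseteq \mathbb{R}^n$ is closed if its complement $\mathbb{R}^n \setminus C$ is open.

The closed ball $\bar{B}(a, r) = \{x \in \mathbb{R}^n : \|x - a\| \le r\}$ is closed. "Closed" and "open" are not opposites: a set can be both (like $\mathbb{R}^n$ itself), or neither (like $[0, 1)$ in $\mathbb{R}$).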

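Let $A \subseteq \mathbb{R}^n$.

- The interior $\operatorname{int}(A)$ is the largest open set contained in $A$: the union of all open sets $U \subseteq A$.

- The closure $\bar{A}$ is the smallest closed set containing $A$: the intersection of all closed sets $C \supseteq A$.

- The boundary is $\partial A = \bar{A} \setminus \operatorname{int}(A)$.

For the open ball $B(0, 1)$ in $\mathbb{R}^2$: $\operatorname{int}(B(0, 1)) = B(0, 1)$, $\overline{B(0, 1)} = \bar{B}(0, 1)$, and $\partial B(0, 1)$ is the unit circle.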

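A set $A$ is open if and only if $A = \operatorname{int}(A)$.

Proof. If $A$ is open, then $A$ is an open set contained in $A$, so $A \subseteq \operatorname{int}(A)$. Since $\operatorname{int}(A) \subseteq A$ by definition, equality holds. Conversely, if $A = \operatorname{int}(A)$, then $A$ is a union of open sets, hence open.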

from sympy import *

# Verify: a point at distance d from center of B(0,1) has max ball radius 1-d

d = Rational(3, 4)

r_max = 1 - d

print(f"Point at distance {d} from center of B(0,1)")

print(f"Max open ball radius: {r_max}")

print(f"Point + ball stays inside: {d + r_max} <= 1 is {d + r_max <= 1}")

# The point (3/4, 0) is interior: ball of radius 1/4 fits

x = Matrix([Rational(3, 4), 0])

print(f"\nPoint x = {x.T}")

print(f"||x|| = {x.norm()} < 1, so x is in B(0,1)")

print(f"B(x, {r_max}) ⊆ B(0,1) ✓")

Verification: interior point and maximal open ball

2. Convergence and continuity

2.1. Sequences and limits

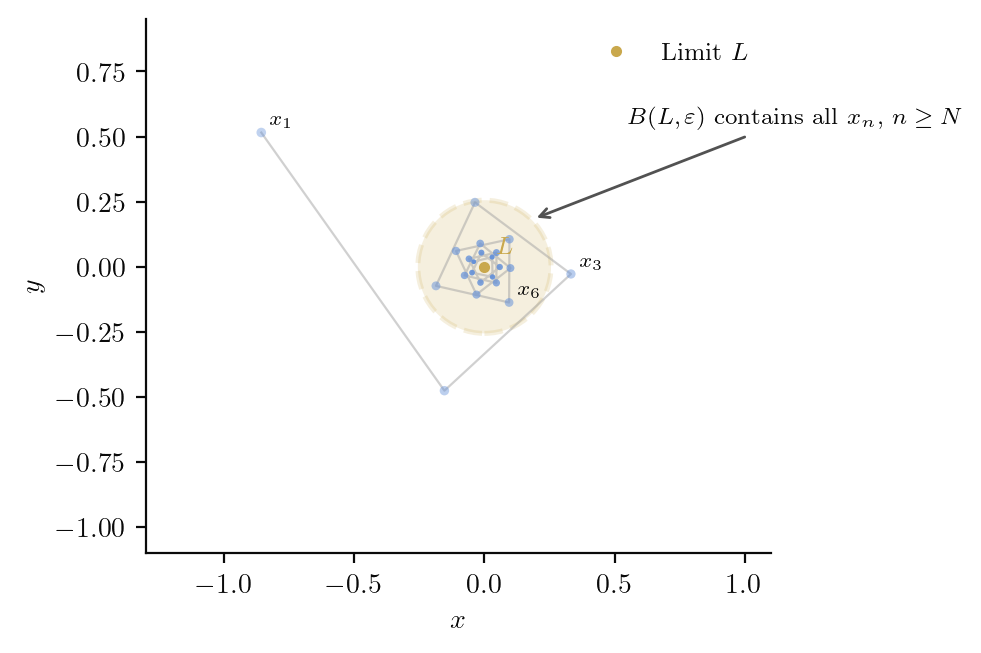

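A sequence $(x_k)$ in $\mathbb{R}^n$ converges to a point $a \in \mathbb{R}^n$, written $x_k \to a$, if for every $\varepsilon > 0$ there exists $N$ such that

$$\|x_k - a\| < \varepsilon \quad \text{for all } k \ge N.$$

This says the tail of the sequence eventually stays inside every open ball centred at $a$, no matter how small.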

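A sequence $(x_k)$ in $\mathbb{R}^n$ converges to $a$ if and only if each coordinate sequence converges: $x_{k,i} \to a_i$ for $i = 1, \dots, n$, where $x_{k,i}$ denotes the $i$-th coordinate of $x_k$.

Proof. ($\Rightarrow$) Since $|x_{k,i} - a_i| \le \|x_k - a\|$, convergence of the full sequence implies convergence of each coordinate.

($\Leftarrow$) Given $\varepsilon > 0$, for each $i$ choose $N_i$ so that $k \ge N_i$ implies $|x_{k,i} - a_i| < \varepsilon / \sqrt{n}$. Set $N = \max\{N_1, \dots, N_n\}$. For $k \ge N$,

$$\|x_k - a\| = \sqrt{\sum_{i=1}^{n} |x_{k,i} - a_i|^2} < \sqrt{n \cdot \frac{\varepsilon^2}{n}} = \varepsilon.$$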
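Coordinatewise convergence can be illustrated symbolically. The sketch below takes a hypothetical sequence $x_k = (1/k,\ k/(k+1))$ in $\mathbb{R}^2$ (our choice, not from the text above), computes each coordinate limit with SymPy's `limit`, and then checks that the Euclidean distance to the resulting point also tends to zero.

```python
from sympy import Matrix, limit, oo, sqrt, symbols

k = symbols('k', positive=True)

# Hypothetical sequence in R^2, chosen for illustration: x_k = (1/k, k/(k+1)).
x_k = Matrix([1 / k, k / (k + 1)])

# Coordinatewise limits give the candidate limit point a.
a = Matrix([limit(coord, k, oo) for coord in x_k])
print(a.T)

# The distance ||x_k - a|| should also tend to 0.
distance = sqrt(sum((x_k[i] - a[i])**2 for i in range(2)))
distance_limit = limit(distance, k, oo)
print(distance_limit)
```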

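A set $C \subseteq \mathbb{R}^n$ is closed if and only if every convergent sequence in $C$ has its limit in $C$.

Proof idea. If the complement is open, a limit point outside $C$ would have a ball missing all sequence terms, contradicting convergence. Conversely, if the complement is not open, we build a sequence in $C$ converging to a point outside $C$.

Proof. ($\Rightarrow$) Suppose $C$ is closed and $(x_k)$ is a sequence in $C$ with $x_k \to a$. If $a \notin C$, then $a \in \mathbb{R}^n \setminus C$, which is open, so there exists $r > 0$ with $B(a, r) \subseteq \mathbb{R}^n \setminus C$. But $x_k \to a$ means $x_k \in B(a, r)$ for large $k$, contradicting $x_k \in C$.

($\Leftarrow$) Suppose every convergent sequence in $C$ has its limit in $C$. We show $\mathbb{R}^n \setminus C$ is open. Let $a \in \mathbb{R}^n \setminus C$. If no ball $B(a, r)$ were contained in $\mathbb{R}^n \setminus C$, then for each $k$ there exists $x_k \in B(a, 1/k) \cap C$, giving a sequence $(x_k)$ in $C$ with $x_k \to a$, so $a \in C$ by hypothesis, a contradiction.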

2.2. Continuous functions

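A function $f : A \to \mathbb{R}^m$ with $A \subseteq \mathbb{R}^n$ is continuous at $a \in A$ if for every $\varepsilon > 0$ there exists $\delta > 0$ such that

$$\|x - a\| < \delta \implies \|f(x) - f(a)\| < \varepsilon \quad \text{for all } x \in A.$$

We say $f$ is continuous if it is continuous at every point.

What this really says. The $\varepsilon$-$\delta$ definition quantifies a single idea: small changes in the input produce small changes in the output. The order of quantifiers matters. The $\varepsilon$ comes first (the adversary picks how accurate they want the output), then $\delta$ responds (we find an input tolerance that achieves it). If the input tolerance can always be found, the function is continuous.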

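A function $f : \mathbb{R}^n \to \mathbb{R}^m$ is continuous if and only if for every open set $V \subseteq \mathbb{R}^m$, the preimage $f^{-1}(V)$ is open in $\mathbb{R}^n$.

Proof idea. The $\varepsilon$-ball around $f(a)$ is inside $V$; the $\delta$-ball around $a$ is inside $f^{-1}(V)$.

Proof. ($\Rightarrow$) Let $V$ be open and $a \in f^{-1}(V)$. Then $f(a) \in V$, so there exists $\varepsilon > 0$ with $B(f(a), \varepsilon) \subseteq V$. By continuity at $a$, there exists $\delta > 0$ with $f(B(a, \delta)) \subseteq B(f(a), \varepsilon)$. Thus $B(a, \delta) \subseteq f^{-1}(V)$, so $f^{-1}(V)$ is open.

($\Leftarrow$) Given $a$ and $\varepsilon > 0$, the open ball $B(f(a), \varepsilon)$ is open in $\mathbb{R}^m$, so $f^{-1}(B(f(a), \varepsilon))$ is open and contains $a$. Thus there exists $\delta > 0$ with $B(a, \delta) \subseteq f^{-1}(B(f(a), \varepsilon))$, which says $\|x - a\| < \delta \implies \|f(x) - f(a)\| < \varepsilon$.

This characterization is the one that generalizes: in an arbitrary topological space, where there is no metric and no $\varepsilon$-$\delta$, continuity is defined as "preimages of open sets are open."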

from sympy import *

# Verify epsilon-delta continuity for f(x) = x^2 at a = 3

# We need |x^2 - 9| < epsilon whenever |x - 3| < delta

# |x^2 - 9| = |x - 3| |x + 3|

# If |x - 3| < 1, then 2 < x < 4, so |x + 3| < 7

# So |x^2 - 9| < 7 * delta. Choose delta = min(1, epsilon/7).

x, eps = symbols('x epsilon', positive=True)

a_val = 3

f = x**2

# At delta = min(1, eps/7), the bound is:

delta = Min(1, eps / 7)

# If |x - 3| < delta <= 1, then |x + 3| < 7, so |f(x) - f(a)| < 7*delta <= eps

bound = 7 * delta

print(f"f(x) = x^2, a = 3")

print(f"delta = min(1, epsilon/7)")

print(f"|f(x) - f(a)| < 7 * delta = {simplify(bound)}")

print(f"For epsilon = 0.01: delta = {delta.subs(eps, Rational(1, 100))} = {float(delta.subs(eps, Rational(1, 100))):.6f}")

print(f"Bound: {float(bound.subs(eps, Rational(1, 100))):.6f} <= 0.01 ✓")

Verification: epsilon-delta continuity

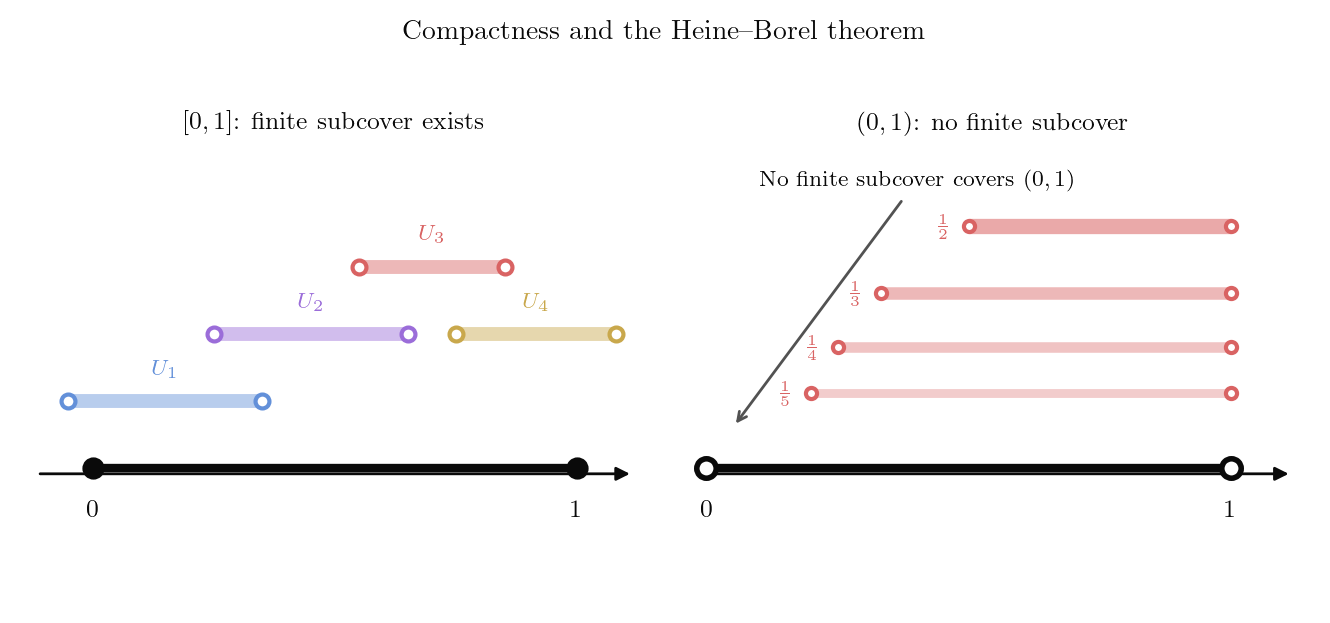

3. Compactness

Compactness is the property that makes optimization, approximation, and uniform estimates possible. Many theorems that "obviously should be true" (continuous functions on closed intervals attain their maximum) are actually consequences of compactness.

3.1. Open covers and the Heine-Borel theorem

Let $K \subseteq \mathbb{R}^n$. An open cover of $K$ is a collection $\{U_\alpha\}_{\alpha \in I}$ of open sets such that $K \subseteq \bigcup_{\alpha \in I} U_\alpha$.

A set $K$ is compact if every open cover of $K$ has a finite subcover: there exist finitely many indices $\alpha_1, \dots, \alpha_m$ such that $K \subseteq U_{\alpha_1} \cup \cdots \cup U_{\alpha_m}$.

The definition quantifies over all open covers, which makes compactness powerful but hard to verify directly. The Heine-Borel theorem gives a concrete characterization in $\mathbb{R}^n$.

A subset $K \subseteq \mathbb{R}^n$ is compact if and only if it is closed and bounded.

Proof idea. The forward direction is straightforward: compactness implies bounded (cover by balls centred at the origin) and closed (a point outside $K$ is separated from $K$ using a finite subcover). The reverse direction is the hard part: we use a least-upper-bound argument on an interval.

Proof.

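($\Rightarrow$, compact implies bounded.) The collection $\{B(0, k)\}_{k \in \mathbb{N}}$ is an open cover of $K$. By compactness, finitely many balls $B(0, k_1), \dots, B(0, k_m)$ cover $K$, so $K \subseteq B(0, N)$ where $N = \max\{k_1, \dots, k_m\}$. Thus $K$ is bounded.

($\Rightarrow$, compact implies closed.) Suppose $a \notin K$. For each $x \in K$, let $r_x = \|x - a\| / 2 > 0$ and set $U_x = B(x, r_x)$. Then $\{U_x\}_{x \in K}$ is an open cover of $K$. Extract a finite subcover $U_{x_1}, \dots, U_{x_m}$. Set $r = \min\{r_{x_1}, \dots, r_{x_m}\}$. One can verify that $B(a, r)$ is disjoint from $U_{x_1} \cup \cdots \cup U_{x_m} \supseteq K$, so $a$ is in the interior of $\mathbb{R}^n \setminus K$. Since this holds for every $a \notin K$, the complement is open, so $K$ is closed.

($\Leftarrow$, closed and bounded implies compact.) We prove this for $n = 1$ first. Let $K \subseteq [a, b]$ be closed, and let $\{U_\alpha\}$ be an open cover of $K$. Define

$$S = \{x \in [a, b] : K \cap [a, x] \text{ is covered by finitely many } U_\alpha\}.$$

We have $a \in S$ (since $a \in K$ implies $a \in U_\alpha$ for some $\alpha$, and otherwise $K \cap [a, a] = \emptyset$ needs no covering). Let $c = \sup S$. We claim $c = b$. If $c < b$, then either $c \in K$, in which case some $U_\alpha$ contains $c$ and hence a neighbourhood $(c - \delta, c + \delta)$, or $c \notin K$, in which case (as $K$ is closed) some neighbourhood $(c - \delta, c + \delta)$ misses $K$ entirely. In both cases, after shrinking $\delta$ so that $c + \delta/2 \le b$, we get $c + \delta/2 \in S$, contradicting $c = \sup S$. The same argument applied at $c = b$ shows $b \in S$, so $K = K \cap [a, b]$ has a finite subcover.

For general $n$, use the fact that a closed and bounded subset of $\mathbb{R}^n$ is contained in a product of closed intervals $[a_1, b_1] \times \cdots \times [a_n, b_n]$, and apply the one-dimensional result inductively.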

3.2. Consequences of compactness

A set $K$ is sequentially compact if every sequence in $K$ has a subsequence that converges to a point in $K$.

In $\mathbb{R}^n$, a set is compact if and only if it is sequentially compact.

Proof. By Heine-Borel, compact in $\mathbb{R}^n$ means closed and bounded. A bounded sequence has a convergent subsequence (Bolzano-Weierstrass). If $K$ is also closed, the limit lies in $K$. Conversely, sequential compactness implies bounded (otherwise build a sequence with $\|x_k\| > k$, which has no convergent subsequence) and closed (limits of convergent sequences lie in $K$).

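If $f : K \to \mathbb{R}$ is continuous and $K \subseteq \mathbb{R}^n$ is compact, then $f$ attains its maximum and minimum on $K$.

Proof. The image $f(K)$ is compact in $\mathbb{R}$ (the continuous image of a compact set is compact). Hence $f(K)$ is closed and bounded. Since $f(K)$ is bounded, $M = \sup f(K)$ is finite. Since $f(K)$ is closed, $M \in f(K)$, so $M = f(x^*)$ for some $x^* \in K$. Similarly for the minimum.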

Why compactness matters. The function $f(x) = x$ on the open interval $(0, 1)$ has $\sup f = 1$ but never attains it. The extreme value theorem fails because $(0, 1)$ is not compact. Compactness is what converts a supremum into a maximum.
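On a compact interval the extreme values can be located concretely by comparing endpoints with interior critical points. The sketch below does this for a hypothetical example, $f(x) = x(1 - x)$ on $[0, 1]$; the function and the names `candidates` and `values` are ours. This is the textbook recipe for differentiable functions, not a general algorithm for continuous ones.

```python
from sympy import Rational, diff, solve, symbols

x = symbols('x', real=True)
f = x * (1 - x)          # hypothetical example function
a, b = 0, 1              # the compact interval [a, b]

# Candidates for extrema on [a, b]: the endpoints and interior critical points.
critical = [c for c in solve(diff(f, x), x) if a < c < b]
candidates = [a, b] + critical
values = {c: f.subs(x, c) for c in candidates}

f_max = max(values.values())
f_min = min(values.values())
print(values)
print(f_max, f_min)
```

Note that the minimum value $0$ is attained only at the endpoints, so on the open interval $(0, 1)$ the same function has an infimum that is never attained.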

4. Connectedness

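A subset $A \subseteq \mathbb{R}^n$ is connected if there do not exist two nonempty open sets $U, V \subseteq \mathbb{R}^n$ with $A \subseteq U \cup V$, $A \cap U \neq \emptyset$, $A \cap V \neq \emptyset$, and $A \cap U \cap V = \emptyset$.

Equivalently, $A$ cannot be written as the union of two nonempty disjoint sets, each open relative to $A$.

A set $A$ is path-connected if for any two points $x, y \in A$, there exists a continuous function $\gamma : [0, 1] \to A$ with $\gamma(0) = x$ and $\gamma(1) = y$.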

Every path-connected set is connected.

Proof. Suppose $A$ is path-connected but not connected. Then there exist disjoint relatively open sets $U, V$ with $A = U \cup V$, both nonempty. Pick $x \in U$, $y \in V$, and let $\gamma$ be a path from $x$ to $y$. Then $\gamma^{-1}(U)$ and $\gamma^{-1}(V)$ are disjoint open subsets of $[0, 1]$ covering $[0, 1]$, with $0 \in \gamma^{-1}(U)$ and $1 \in \gamma^{-1}(V)$. But $[0, 1]$ is connected (it is an interval), a contradiction.

The converse is false in general (there exist connected sets that are not path-connected), but in $\mathbb{R}^n$ every open connected set is path-connected.

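If $f : [a, b] \to \mathbb{R}$ is continuous and $f(a) < c < f(b)$, then there exists $x \in (a, b)$ with $f(x) = c$.

Proof. The interval $[a, b]$ is connected (this is the same fact about intervals used in the previous proof; it follows from the least upper bound property of $\mathbb{R}$). The continuous image of a connected set is connected. So $f([a, b])$ is a connected subset of $\mathbb{R}$, hence an interval. Since $f(a)$ and $f(b)$ are in this interval and $c$ lies between them, $c \in f([a, b])$.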

The school calculus version. Students are typically told that the IVT is "obvious" because "a continuous function can't jump over a value." The proof above makes the actual content precise: it is a consequence of the fact that intervals are connected and continuous images of connected sets are connected. Nothing about "not jumping" is needed.
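The intermediate value theorem guarantees that a crossing point exists; bisection is one simple way to locate it numerically. The sketch below is an illustration under assumptions of our own: the function $f(x) = x^3 - x - 1$, the target value $c = 0$, and the interval $[1, 2]$ are hypothetical choices. Exact rationals are used so that every comparison is exact.

```python
from sympy import Rational, symbols

x = symbols('x')
f = x**3 - x - 1         # hypothetical continuous function
c = 0                    # target value, with f(1) < c < f(2)

lo, hi = Rational(1), Rational(2)
assert f.subs(x, lo) < c < f.subs(x, hi)

# Bisection: maintain an interval [lo, hi] with f(lo) < c <= f(hi),
# halving its width at every step.
for _ in range(30):
    mid = (lo + hi) / 2
    if f.subs(x, mid) < c:
        lo = mid
    else:
        hi = mid

print(float(lo), float(hi))
```

The invariant $f(\text{lo}) < c \le f(\text{hi})$ is exactly the hypothesis of the theorem, restated on a smaller interval at every step.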

5. The derivative as a linear map

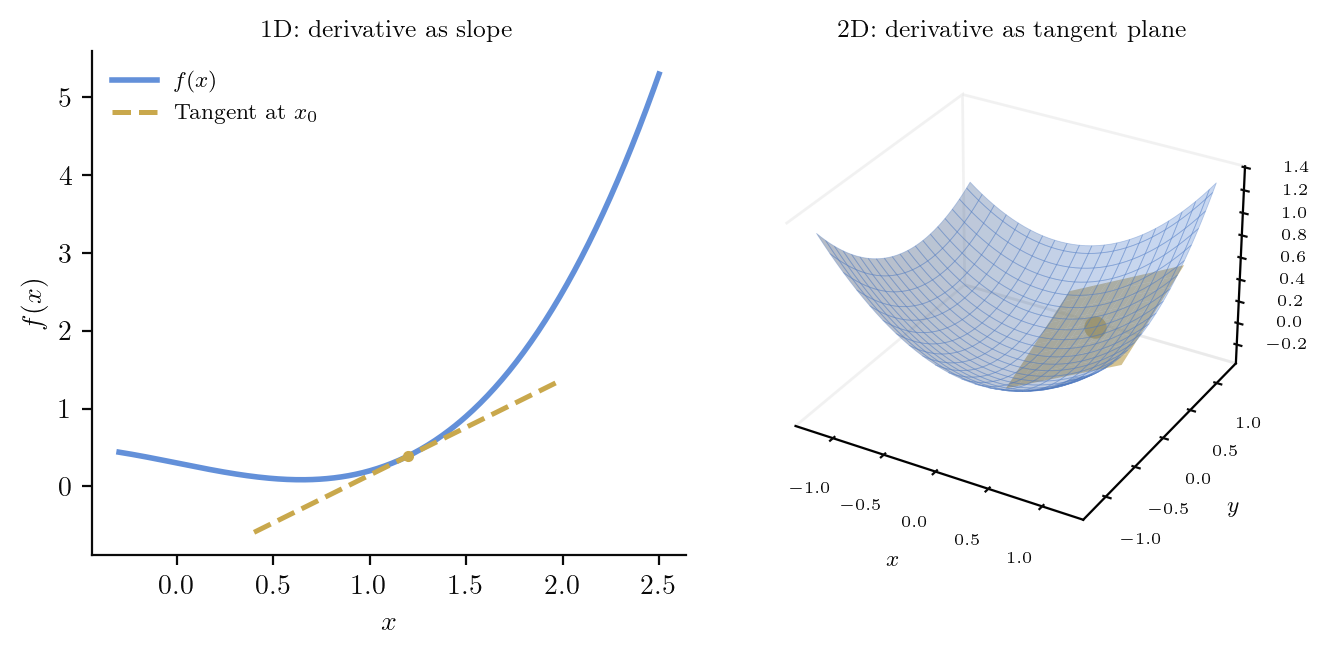

This is the conceptual heart of Part I. In school calculus, the derivative of $f : \mathbb{R} \to \mathbb{R}$ at a point $a$ is a number: the slope of the tangent line. In higher dimensions, the derivative of $f : \mathbb{R}^n \to \mathbb{R}^m$ at $a$ is not a number. It is a linear map.

5.1. The definition

Let $U \subseteq \mathbb{R}^n$ be open and $f : U \to \mathbb{R}^m$. We say $f$ is differentiable at $a \in U$ if there exists a linear map $L : \mathbb{R}^n \to \mathbb{R}^m$ such that

$$\lim_{h \to 0} \frac{\|f(a + h) - f(a) - L(h)\|}{\|h\|} = 0.$$

The linear map $L$, when it exists, is called the derivative (or total derivative, or differential) of $f$ at $a$, and is written $Df(a)$ or $df_a$.

Read this definition carefully. It says that $f(a + h) \approx f(a) + Df(a)h$ with an error that vanishes faster than $\|h\|$. The linear map $Df(a)$ is the best linear approximation to $f$ near $a$. In one dimension, $Df(a)h = f'(a)h$, and the definition reduces to the usual one. But in higher dimensions, $Df(a)$ is a genuine linear map: it takes a vector $h \in \mathbb{R}^n$ and returns a vector $Df(a)h \in \mathbb{R}^m$.

Misconception: "the derivative is a matrix." The Jacobian matrix $J_f(a)$ is the matrix representation of $Df(a)$ in the standard bases. The derivative itself is a linear map, a geometric object that exists independently of any choice of coordinates. When we write $Df(a) = J_f(a)$, we are choosing bases and representing the linear map as a matrix. This distinction becomes critical on manifolds, where there is no canonical basis.

The visualisation above shows the complex squaring map $f(z) = z^2$, that is, $f(x, y) = (x^2 - y^2, 2xy)$. Toggle between the function, its linearisation $f(a) + Df(a)h$, and the error. As you zoom in (increase the slider), the error vanishes, confirming that the linear map is the best local approximation.
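The claim that the error vanishes faster than $\|h\|$ can be checked symbolically for the complex squaring map, written in real coordinates as $f(x, y) = (x^2 - y^2, 2xy)$. The sketch below computes the error of the linear approximation at a general base point; the symbol names `a1`, `a2`, `h1`, `h2` are ours.

```python
from sympy import Matrix, expand, symbols

x, y, a1, a2, h1, h2 = symbols('x y a1 a2 h1 h2', real=True)

f = Matrix([x**2 - y**2, 2 * x * y])      # complex squaring map z -> z^2
J = f.jacobian([x, y])

at_a = {x: a1, y: a2}
at_a_plus_h = {x: a1 + h1, y: a2 + h2}
h = Matrix([h1, h2])

# Error of the linear approximation: f(a + h) - f(a) - J_f(a) h.
error = (f.subs(at_a_plus_h) - f.subs(at_a) - J.subs(at_a) * h).applyfunc(expand)
print(error.T)

# ||error||^2 equals ||h||^4, so ||error|| / ||h|| = ||h|| -> 0 as h -> 0.
residual = expand(error.dot(error) - (h1**2 + h2**2)**2)
print(residual)
```

For this map the error is exactly the square of $h$ viewed as a complex number, which is why its norm is $\|h\|^2$ regardless of the base point.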

5.2. Uniqueness and consequences

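If $f$ is differentiable at $a$, then $Df(a)$ is unique.

Proof. Suppose $L_1$ and $L_2$ both satisfy the defining limit. Then for any $h \neq 0$,

$$\frac{\|L_1(h) - L_2(h)\|}{\|h\|} \le \frac{\|f(a + h) - f(a) - L_1(h)\|}{\|h\|} + \frac{\|f(a + h) - f(a) - L_2(h)\|}{\|h\|} \to 0$$

as $h \to 0$. Writing $h = tv$ for a unit vector $v$ and $t > 0$, we get $\|L_1(v) - L_2(v)\| = \|L_1(tv) - L_2(tv)\| / t \to 0$ as $t \to 0$, so $L_1(v) = L_2(v)$ for every unit vector $v$. By linearity, $L_1 = L_2$.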

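If $f$ is differentiable at $a$, then $f$ is continuous at $a$.

Proof. We have $f(a + h) - f(a) = Df(a)h + r(h)$ where $\|r(h)\| / \|h\| \to 0$. Since $Df(a)$ is linear (hence continuous), both $Df(a)h \to 0$ and $r(h) \to 0$ as $h \to 0$, so $f(a + h) \to f(a)$.

The converse is false: $f(x) = |x|$ is continuous at $0$ but not differentiable there.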
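For the absolute value function $f(x) = |x|$, the standard counterexample, the failure of differentiability at $0$ shows up as disagreeing one-sided limits of the difference quotient. A minimal SymPy sketch:

```python
from sympy import Abs, limit, symbols

h = symbols('h', real=True)

# Difference quotient of f(x) = |x| at 0: (|0 + h| - |0|) / h.
quotient = Abs(h) / h

right = limit(quotient, h, 0, '+')
left = limit(quotient, h, 0, '-')
print(right, left)

# Continuity at 0 still holds: |h| -> 0 from both sides.
cont_right = limit(Abs(h), h, 0, '+')
cont_left = limit(Abs(h), h, 0, '-')
print(cont_right, cont_left)
```

Since the two one-sided slopes differ, no single linear map $h \mapsto Lh$ can approximate $|h|$ to first order at $0$.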

5.3. The Jacobian matrix

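If $f = (f_1, \dots, f_m) : U \to \mathbb{R}^m$ is differentiable at $a$, the Jacobian matrix of $f$ at $a$ is the $m \times n$ matrix

$$J_f(a) = \begin{pmatrix} \dfrac{\partial f_1}{\partial x_1}(a) & \cdots & \dfrac{\partial f_1}{\partial x_n}(a) \\ \vdots & \ddots & \vdots \\ \dfrac{\partial f_m}{\partial x_1}(a) & \cdots & \dfrac{\partial f_m}{\partial x_n}(a) \end{pmatrix}$$

that represents $Df(a)$ in the standard bases: $Df(a)h = J_f(a)h$.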

6. The chain rule

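Let $U \subseteq \mathbb{R}^n$ and $V \subseteq \mathbb{R}^m$ be open. If $f : U \to V$ is differentiable at $a$ and $g : V \to \mathbb{R}^p$ is differentiable at $f(a)$, then $g \circ f$ is differentiable at $a$ and

$$D(g \circ f)(a) = Dg(f(a)) \circ Df(a).$$

In terms of Jacobian matrices: $J_{g \circ f}(a) = J_g(f(a))\, J_f(a)$.

Proof idea. Both $f$ and $g$ are well-approximated by their derivatives. The composition of two linear approximations is a linear approximation to the composition.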

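Proof. Write $b = f(a)$, $A = Df(a)$, $B = Dg(b)$. We need to show

$$\lim_{h \to 0} \frac{\|g(f(a + h)) - g(b) - BAh\|}{\|h\|} = 0.$$

Set $k = f(a + h) - f(a)$, so $f(a + h) = b + k$. Then

$$g(b + k) - g(b) = Bk + \|k\|\,\eta(k),$$

where $\eta(k) \to 0$ as $k \to 0$. Also $k = Ah + \|h\|\,\epsilon(h)$ where $\epsilon(h) \to 0$. Therefore

$$g(f(a + h)) - g(b) - BAh = \|h\|\,B\epsilon(h) + \|k\|\,\eta(k).$$

Dividing by $\|h\|$: the first term is $B\epsilon(h) \to 0$. For the second, note $\|k\| \le \|A\|\,\|h\| + \|h\|\,\|\epsilon(h)\|$, so $\|k\| / \|h\|$ is bounded, and $\eta(k) \to 0$ since $k \to 0$ as $h \to 0$ (because $f$ is continuous at $a$).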

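What the chain rule really says. The derivative of a composition is the composition of the derivatives. In one dimension this is $(g \circ f)'(a) = g'(f(a))\, f'(a)$, where the "multiplication" is scalar multiplication. In higher dimensions, the "multiplication" is composition of linear maps (i.e., matrix multiplication). The chain rule for $n = m = p = 1$ is a degenerate special case.

Let $f : \mathbb{R}^2 \to \mathbb{R}^2$ be $f(x, y) = (x^2 - y^2, 2xy)$ and $g : \mathbb{R}^2 \to \mathbb{R}$ be $g(u, v) = u^2 + v^2$. Then

$$J_f(x, y) = \begin{pmatrix} 2x & -2y \\ 2y & 2x \end{pmatrix}, \qquad J_g(u, v) = \begin{pmatrix} 2u & 2v \end{pmatrix}.$$

At the point $(1, 1)$, $f(1, 1) = (0, 2)$, and

$$J_g(0, 2)\, J_f(1, 1) = \begin{pmatrix} 0 & 4 \end{pmatrix} \begin{pmatrix} 2 & -2 \\ 2 & 2 \end{pmatrix} = \begin{pmatrix} 8 & 8 \end{pmatrix}.$$

Alternatively, $(g \circ f)(x, y) = (x^2 - y^2)^2 + 4x^2y^2 = (x^2 + y^2)^2$, so $J_{g \circ f}(1, 1) = \begin{pmatrix} 4x(x^2 + y^2) & 4y(x^2 + y^2) \end{pmatrix}\big|_{(1,1)} = \begin{pmatrix} 8 & 8 \end{pmatrix}$. The two methods agree.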

from sympy import *

x, y = symbols('x y')

# f: R^2 -> R^2, the complex squaring map

f1 = x**2 - y**2

f2 = 2*x*y

# g: R^2 -> R, the squared norm

u, v = symbols('u v')

g_expr = u**2 + v**2

# Jacobians

Jf = Matrix([[diff(f1, x), diff(f1, y)],

[diff(f2, x), diff(f2, y)]])

Jg = Matrix([[diff(g_expr, u), diff(g_expr, v)]])

print("J_f(x,y) =", Jf)

print("J_g(u,v) =", Jg)

# Chain rule at (1, 1)

pt = {x: 1, y: 1}

f_at_pt = (f1.subs(pt), f2.subs(pt))

print(f"\nf(1,1) = {f_at_pt}")

Jf_at = Jf.subs(pt)

Jg_at = Jg.subs({u: f_at_pt[0], v: f_at_pt[1]})

product = Jg_at * Jf_at

print(f"J_g(f(1,1)) * J_f(1,1) = {Jg_at} * {Jf_at} = {product}")

# Direct computation

h = (f1**2 + f2**2).expand()

Jh = Matrix([[diff(h, x), diff(h, y)]])

print(f"\nDirect: (g∘f)(x,y) = {h}")

print(f"J_(g∘f)(1,1) = {Jh.subs(pt)}")

print(f"\nChain rule verified: {product == Jh.subs(pt)} ✓")

Verification: chain rule for complex squaring composed with squared norm

7. Partial derivatives and $C^1$ functions

7.1. Partial derivatives

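Let $f : U \to \mathbb{R}^m$ where $U \subseteq \mathbb{R}^n$ is open. The partial derivative of $f$ with respect to $x_j$ at $a \in U$ is

$$\frac{\partial f}{\partial x_j}(a) = \lim_{t \to 0} \frac{f(a + t e_j) - f(a)}{t},$$

where $e_j$ is the $j$-th standard basis vector.

The partial derivative is just the ordinary derivative of the function $t \mapsto f(a + t e_j)$ at $t = 0$. It measures the rate of change of $f$ when only the $j$-th coordinate varies.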

A common misconception. The existence of all partial derivatives at a point does not imply differentiability there. The function $f(x, y) = \dfrac{xy}{x^2 + y^2}$ (extended by $f(0, 0) = 0$) has both partial derivatives at the origin equal to zero, but is not even continuous at the origin (approach along $y = x$ to get $f(x, x) = 1/2$).
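This counterexample can be checked directly. The sketch below assumes the standard example $f(x, y) = xy / (x^2 + y^2)$ with $f(0, 0) = 0$ (an assumption on our part about which function is meant), computes both partial derivatives at the origin from the limit definition, and evaluates $f$ along the diagonal $y = x$.

```python
from sympy import Rational, limit, simplify, symbols

x, y, t = symbols('x y t', real=True)
f = x * y / (x**2 + y**2)     # defined away from the origin; we set f(0, 0) = 0

# Partial derivatives at the origin from the limit definition.
# On the coordinate axes f vanishes, so both difference quotients are 0.
fx0 = limit((f.subs({x: t, y: 0}) - 0) / t, t, 0)
fy0 = limit((f.subs({x: 0, y: t}) - 0) / t, t, 0)
print(fx0, fy0)

# Along the diagonal y = x the function is constant and nonzero,
# so f(t, t) does not tend to f(0, 0) = 0 as t -> 0.
diagonal = simplify(f.subs({x: t, y: t}))
print(diagonal)
```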

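If $f$ is differentiable at $a$, then all partial derivatives exist and the $(i, j)$-entry of the Jacobian matrix is $\dfrac{\partial f_i}{\partial x_j}(a)$.

Proof. Taking $h = t e_j$ in the definition of the derivative shows that $Df(a)e_j = \dfrac{\partial f}{\partial x_j}(a)$. Now $Df(a)e_j$ is the $j$-th column of the Jacobian, and its $i$-th component is $\dfrac{\partial f_i}{\partial x_j}(a)$.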

7.2. Continuously differentiable functions

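A function $f : U \to \mathbb{R}^m$ is of class $C^1$ (or continuously differentiable) if all partial derivatives $\partial f_i / \partial x_j$ exist and are continuous on $U$.

More generally, $f$ is of class $C^k$ if all partial derivatives up to order $k$ exist and are continuous. $f$ is $C^\infty$ (or smooth) if it is $C^k$ for every $k$.

If $f$ is $C^1$ on a neighbourhood of $a$, then $f$ is differentiable at $a$.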

Proof idea. Apply the mean value theorem to each component along coordinate directions, then use continuity of the partial derivatives to control the error.

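Proof (for $n = 2$, $m = 1$). Write $a = (a_1, a_2)$ and $h = (h_1, h_2)$. Then

$$f(a + h) - f(a) = \big[f(a_1 + h_1, a_2 + h_2) - f(a_1, a_2 + h_2)\big] + \big[f(a_1, a_2 + h_2) - f(a_1, a_2)\big].$$

By the mean value theorem applied to the first variable, the first bracket equals $h_1\, \partial_1 f(\xi_1, a_2 + h_2)$ for some $\xi_1$ between $a_1$ and $a_1 + h_1$. Similarly, the second bracket equals $h_2\, \partial_2 f(a_1, \xi_2)$ for some $\xi_2$ between $a_2$ and $a_2 + h_2$. Therefore

$$f(a + h) - f(a) - \partial_1 f(a)\,h_1 - \partial_2 f(a)\,h_2 = h_1\big[\partial_1 f(\xi_1, a_2 + h_2) - \partial_1 f(a)\big] + h_2\big[\partial_2 f(a_1, \xi_2) - \partial_2 f(a)\big].$$

Each bracketed factor tends to 0 as $h \to 0$ (by continuity of the partials), and $|h_i| \le \|h\|$, so the whole expression is $o(\|h\|)$.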

7.3. Clairaut's theorem

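If $f : U \to \mathbb{R}$ is $C^2$, then for all $i, j$,

$$\frac{\partial^2 f}{\partial x_i\, \partial x_j} = \frac{\partial^2 f}{\partial x_j\, \partial x_i}.$$

Proof. We prove it for $n = 2$, $i = 1$, $j = 2$, at a point $(a, b) \in U$. Define

$$\Delta(h, k) = f(a + h, b + k) - f(a + h, b) - f(a, b + k) + f(a, b).$$

Applying the mean value theorem to $\varphi(x) = f(x, b + k) - f(x, b)$:

$$\Delta(h, k) = \varphi(a + h) - \varphi(a) = h\,\big[\partial_1 f(\xi, b + k) - \partial_1 f(\xi, b)\big]$$

for some $\xi$ between $a$ and $a + h$. Applying the MVT again to the bracket:

$$\Delta(h, k) = hk\, \partial_2 \partial_1 f(\xi, \eta)$$

for some $\eta$ between $b$ and $b + k$. Repeating the argument in the other order (first in $y$, then in $x$) gives

$$\Delta(h, k) = hk\, \partial_1 \partial_2 f(\xi', \eta')$$

for some $(\xi', \eta')$ in the same rectangle. Dividing both expressions by $hk$ and sending $(h, k) \to (0, 0)$, using continuity of the second-order partials: $\partial_2 \partial_1 f(a, b) = \partial_1 \partial_2 f(a, b)$.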
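Equality of mixed partials is easy to confirm on a concrete smooth function. The sketch below picks a hypothetical test function (our choice) and differentiates in both orders with SymPy.

```python
from sympy import diff, exp, expand, sin, symbols

x, y = symbols('x y', real=True)
f = exp(x * y) * sin(x + y**2)    # hypothetical smooth test function

f_xy = diff(diff(f, x), y)        # differentiate in x first, then in y
f_yx = diff(diff(f, y), x)        # differentiate in y first, then in x

difference = expand(f_xy - f_yx)
print(difference)
```

This checks one example rather than proving the theorem, but it makes visible that the two orders of differentiation produce the same expression.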

8. The inverse function theorem

8.1. The contraction mapping principle

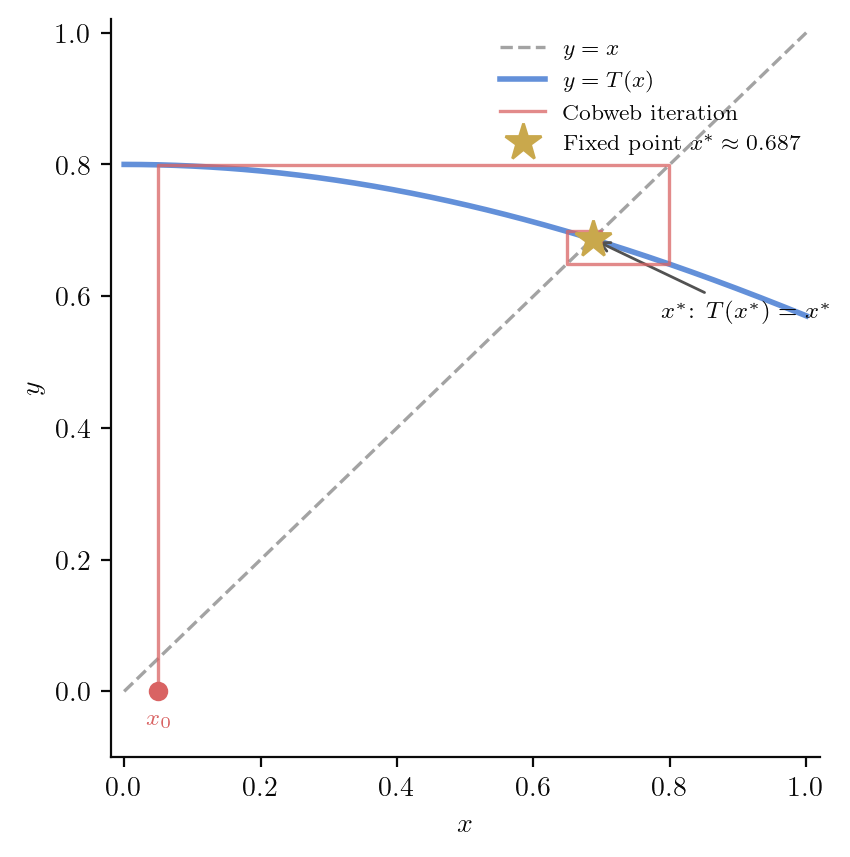

The inverse function theorem is proved using a fixed-point argument. We need:

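Let $(X, d)$ be a metric space. A function $T : X \to X$ is a contraction if there exists $c \in [0, 1)$ such that

$$d(T(x), T(y)) \le c\, d(x, y)$$

for all $x, y \in X$.

If $(X, d)$ is a complete metric space and $T : X \to X$ is a contraction with constant $c$, then $T$ has a unique fixed point $x^* \in X$.

Moreover, for any starting point $x_0 \in X$, the iterates $x_{k+1} = T(x_k)$ converge to $x^*$, and $d(x_k, x^*) \le \dfrac{c^k}{1 - c}\, d(x_0, x_1)$.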

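Proof. Existence. Pick any $x_0 \in X$ and set $x_{k+1} = T(x_k)$. By induction, $d(x_k, x_{k+1}) \le c^k\, d(x_0, x_1)$. For $m > k$,

$$d(x_k, x_m) \le \sum_{j=k}^{m-1} d(x_j, x_{j+1}) \le \sum_{j=k}^{m-1} c^j\, d(x_0, x_1) \le \frac{c^k}{1 - c}\, d(x_0, x_1) \to 0$$

as $k \to \infty$, so $(x_k)$ is Cauchy. Since $X$ is complete, $x_k \to x^*$ for some $x^* \in X$. By continuity of $T$ (contractions are Lipschitz, hence continuous), $T(x^*) = \lim T(x_k) = \lim x_{k+1} = x^*$.

Uniqueness. If $T(x) = x$ and $T(y) = y$, then $d(x, y) = d(T(x), T(y)) \le c\, d(x, y)$. Since $c < 1$, this forces $d(x, y) = 0$.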
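The fixed-point iteration and its a priori error bound can be observed numerically. The sketch below uses a hypothetical example of our own: $T(x) = \cos x$ on the complete space $[0, 1]$, which maps $[0, 1]$ into itself and has $|T'(x)| = |\sin x| \le \sin 1 < 1$ there, so $c = \sin 1$ is a valid contraction constant. The bound tested is the standard estimate $d(x_k, x^*) \le \frac{c^k}{1 - c}\, d(x_0, x_1)$, with the 200th iterate standing in for $x^*$.

```python
import math

T = math.cos            # hypothetical contraction on the complete space [0, 1]
c = math.sin(1.0)       # |T'(x)| = |sin x| <= sin(1) < 1 on [0, 1]

x0 = 0.0
x1 = T(x0)

# Iterate x_{k+1} = T(x_k); the last iterate approximates the fixed point.
iterates = [x0]
for _ in range(200):
    iterates.append(T(iterates[-1]))
x_star = iterates[-1]

# Compare the actual error with the a priori bound c^k / (1 - c) * d(x0, x1).
for k in (0, 5, 10, 20):
    actual = abs(iterates[k] - x_star)
    bound = c**k / (1 - c) * abs(x1 - x0)
    print(k, actual, bound, actual <= bound)
```

The bound is pessimistic here because $\sin 1$ is the worst-case slope on the whole interval, while the iterates quickly settle near the fixed point where the slope is smaller.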

8.2. The inverse function theorem

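Let $U \subseteq \mathbb{R}^n$ be open and $f : U \to \mathbb{R}^n$ be $C^1$. If $Df(a)$ is invertible (i.e., $\det J_f(a) \neq 0$), then there exist open sets $V \ni a$ and $W \ni f(a)$ such that $f : V \to W$ is a bijection with $C^1$ inverse.

Proof. By composing with the linear map $Df(a)^{-1}$, we may assume $Df(a) = I$ (the identity). Define $g(x) = x - f(x)$, so $Dg(a) = 0$. Since $f$ is $C^1$, the entries of $Dg$ are continuous, so there exists $r > 0$ such that $\|Dg(x)\| \le \tfrac{1}{2}$ for $x \in \bar{B}(a, r)$.

By the mean value inequality, $\|g(x) - g(y)\| \le \tfrac{1}{2}\|x - y\|$ for $x, y \in \bar{B}(a, r)$. In particular, $g$ is a contraction on $\bar{B}(a, r)$.

Surjectivity onto a neighbourhood. Fix $y$ with $\|y - f(a)\|$ small. Define $\varphi_y(x) = x - f(x) + y = g(x) + y$. Then $\varphi_y$ is a contraction on $\bar{B}(a, r)$ (same constant $\tfrac{1}{2}$), and if $\|y - f(a)\|$ is small enough, $\varphi_y$ maps $\bar{B}(a, r)$ to itself. By the Banach fixed point theorem, $\varphi_y$ has a unique fixed point $x$, and $\varphi_y(x) = x$ means $f(x) = y$.

Injectivity. If $f(x_1) = f(x_2)$, then $x_1 - x_2 = g(x_1) - g(x_2)$, so $\|x_1 - x_2\| \le \tfrac{1}{2}\|x_1 - x_2\|$, which forces $x_1 = x_2$.

Smoothness of the inverse. By the chain rule, if $f^{-1}$ is differentiable, then $D(f^{-1})(y) = \big[Df(f^{-1}(y))\big]^{-1}$. It remains to show $f^{-1}$ is differentiable, which follows from the fact that $f$ is $C^1$ and $Df$ is continuous and invertible in a neighbourhood of $a$.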

In the visualisation above, the source panel (left) shows a small disk around the base point. The target panel (right) shows the image of that disk under $f$. When the base point is away from the origin, $\det J_f \neq 0$ and the image is a well-defined region (local diffeomorphism). As you drag the point towards the origin, $\det J_f \to 0$ and the image collapses, visualising the failure of invertibility.
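Assuming the map in this visualisation is the complex squaring map $f(x, y) = (x^2 - y^2, 2xy)$ from Section 5 (the behaviour at the origin suggests so, but this is our assumption), its Jacobian determinant can be computed explicitly. The sketch also illustrates that the inverse function theorem is a local statement: away from the origin $f$ is locally invertible, yet it is not globally injective.

```python
from sympy import Matrix, factor, symbols

x, y = symbols('x y', real=True)
f = Matrix([x**2 - y**2, 2 * x * y])   # complex squaring map z -> z^2

J = f.jacobian([x, y])
det_J = factor(J.det())
print(det_J)

# The determinant vanishes at the origin and is positive elsewhere.
det_at_origin = det_J.subs({x: 0, y: 0})
det_at_sample = det_J.subs({x: 1, y: 2})
print(det_at_origin, det_at_sample)

# Local invertibility does not give global injectivity: z and -z share an image.
same_image = f.subs({x: 1, y: 2}) == f.subs({x: -1, y: -2})
print(same_image)
```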

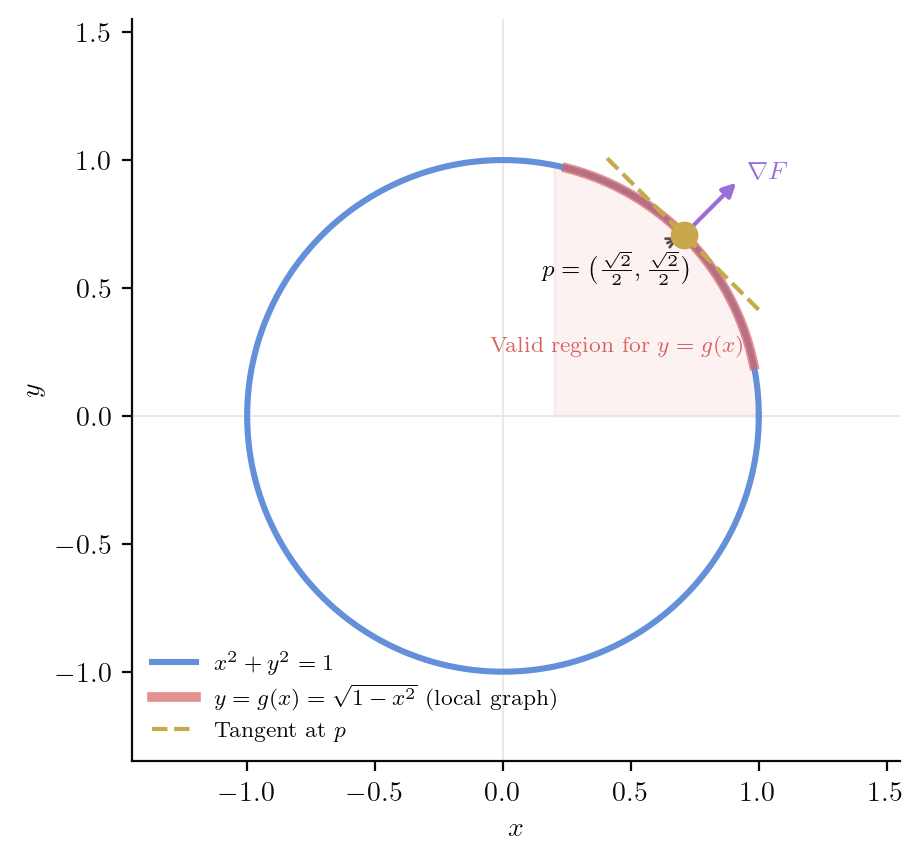

9. The implicit function theorem

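The implicit function theorem tells us when the level set $F(x, y) = 0$ can be locally written as a graph $y = g(x)$.

Let $U \subseteq \mathbb{R}^{n+m}$ be open and $F : U \to \mathbb{R}^m$ be $C^1$. Suppose $F(a, b) = 0$ and the partial derivative $D_y F(a, b)$ (the $m \times m$ matrix of partial derivatives with respect to the last $m$ variables) is invertible.

Then there exist open sets $V \ni a$ in $\mathbb{R}^n$ and $W \ni b$ in $\mathbb{R}^m$ and a unique $C^1$ function $g : V \to W$ such that

$$\{(x, y) \in V \times W : F(x, y) = 0\} = \{(x, g(x)) : x \in V\}.$$

Moreover, $Dg(x) = -\big[D_y F(x, g(x))\big]^{-1} D_x F(x, g(x))$.

Proof idea. Define $\Phi(x, y) = (x, F(x, y))$. Then $D\Phi(a, b)$ is invertible (its determinant is $\det D_y F(a, b) \neq 0$). Apply the inverse function theorem to $\Phi$.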

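Proof. Define $\Phi : U \to \mathbb{R}^{n+m}$ by $\Phi(x, y) = (x, F(x, y))$. Then

$$D\Phi(a, b) = \begin{pmatrix} I_n & 0 \\ D_x F(a, b) & D_y F(a, b) \end{pmatrix},$$

which is invertible since $D_y F(a, b)$ is invertible. By the inverse function theorem, $\Phi$ is a local diffeomorphism near $(a, b)$. Let $\Psi$ be the local inverse. Write $\Psi(x, z) = (x, \psi(x, z))$ (the first component of $\Psi$ is $x$ since $\Phi$ fixes the first $n$ coordinates).

Now $\Phi(\Psi(x, z)) = (x, z)$, so $F(x, \psi(x, z)) = z$. Define $g(x) = \psi(x, 0)$. Then $F(x, g(x)) = 0$, so the graph of $g$ lies in the level set.

The formula for $Dg$ follows by differentiating $F(x, g(x)) = 0$ with respect to $x$ using the chain rule:

$$D_x F(x, g(x)) + D_y F(x, g(x))\, Dg(x) = 0.$$

Consider $F(x, y) = x^2 + y^2 - 1$. At the point $(\sqrt{2}/2, \sqrt{2}/2)$, we have $F = 0$ and $\partial F / \partial y = \sqrt{2} \neq 0$. By the implicit function theorem, near this point $y = g(x) = \sqrt{1 - x^2}$, and

$$g'(x) = -\frac{\partial F / \partial x}{\partial F / \partial y} = -\frac{2x}{2y} = -\frac{x}{\sqrt{1 - x^2}}.$$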

from sympy import *

x, y = symbols('x y')

F = x**2 + y**2 - 1

# Check conditions at (sqrt(2)/2, sqrt(2)/2)

a, b = sqrt(2)/2, sqrt(2)/2

print(f"F({a}, {b}) = {F.subs([(x, a), (y, b)])}")

print(f"dF/dy at ({a}, {b}) = {diff(F, y).subs([(x, a), (y, b)])} (nonzero ✓)")

# Implicit function: solve F = 0 for y > 0

g = sqrt(1 - x**2)

print(f"\nImplicit function: g(x) = {g}")

print(f"g'(x) = {diff(g, x)}")

print(f"g'({a}) = {diff(g, x).subs(x, a)} = {simplify(diff(g, x).subs(x, a))}")

# Verify formula: g'(x) = -F_x / F_y

formula = -diff(F, x) / diff(F, y)

print(f"\n-F_x/F_y = {simplify(formula)}")

# Substitute y = g(x) so that both sides are functions of x alone
print(f"Matches g'(x): {simplify(formula.subs(y, g) - diff(g, x)) == 0} ✓")

Verification: implicit function theorem for the unit circle

Looking ahead. The implicit function theorem is how manifolds are defined: a smooth $k$-dimensional manifold can be locally described as the level set of a smooth function $F : \mathbb{R}^n \to \mathbb{R}^{n-k}$ with surjective derivative. The implicit function theorem guarantees that each such level set is locally a graph. In Part III, we will return to this idea when we define smooth manifolds and their tangent spaces.
